Text | Kaori

Editor | Sleepy.md

The conflict between OpenAI and Anthropic will not stop, because it cannot.

Anthropic needs an insufficiently safe rival to prove that its safety narrative is necessary, while OpenAI needs a hypocritical adversary to justify its open narrative. This structure makes each company the other's best advertising material, and every round of attack supplies the motive for the next.

Both companies are racing toward their IPO windows at the same time, and the sniping serves a more practical function: fixing each company's valuation logic in investors' minds ahead of time. Whoever holds the more legitimate narrative will command the higher valuation.

The internal letter that OpenAI's Chief Revenue Officer Denise Dresser sent to all employees in early April is a product of exactly this logic, because it was never really meant for employees.

Dresser devoted an entire section to a systematic attack on Anthropic. Its brand narrative, she wrote, is built on fear, restrictions, and the idea that a small elite should control AI. A strategic miscalculation on computing power has already led to product limits and a worse user experience. And Anthropic's publicly stated annualized revenue of $30 billion is overstated by about $8 billion, because Anthropic books the full amounts customers pay through Amazon and Google as revenue, while OpenAI books its revenue share with Microsoft on a net basis.

It is almost unprecedented in the tech industry for a company's chief revenue officer to devote part of an internal letter to dismantling a competitor's accounting practices.

The $8 Billion Accounting Allegation

The letter reached reporters at Bloomberg and The Verge within a day. The week before, OpenAI had sent a separate memo to investors arguing that Anthropic is on a slower growth trajectory.

The $8 billion allegation hinges on an accounting term.

When Anthropic distributes Claude through AWS and Google Cloud, it counts the full amount customers pay the cloud platform as its own revenue and records the commission kept by the platform as a cost. This is the gross method. OpenAI's revenue share with Microsoft uses the net method, which counts only what OpenAI actually receives.

Both methods are permitted under US Generally Accepted Accounting Principles. Anthropic argues that it acts as the principal in these transactions and that the cloud platform is merely a distribution channel. OpenAI counters that the net method is the standard publicly traded companies are expected to follow.

Both sides have valid points, but having a valid argument is not the focus; the focus is on the outcome.

Recast on a net basis, Anthropic's comparable revenue drops from $30 billion to $22 billion, below OpenAI's self-reported $25 billion.
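To make the gross-versus-net gap concrete, here is a minimal Python sketch. Only the $30 billion and $22 billion totals come from the article; the split between direct revenue, channel spend, and the platforms' share is a hypothetical assumption chosen so the totals match, not a disclosed figure.

```python
from dataclasses import dataclass


@dataclass
class RevenueMix:
    # All figures in billions of US dollars. The split is HYPOTHETICAL.
    direct: float          # paid directly to the model vendor
    channel_gross: float   # total customer spend routed through cloud marketplaces
    platform_share: float  # portion of channel spend kept by the cloud platforms


def gross_revenue(r: RevenueMix) -> float:
    """Gross method: book all channel spend as revenue; the platform cut becomes a cost."""
    return r.direct + r.channel_gross


def net_revenue(r: RevenueMix) -> float:
    """Net method: book only what the vendor actually keeps from the channel."""
    return r.direct + (r.channel_gross - r.platform_share)


# Hypothetical mix that reproduces the article's $30B (gross) vs $22B (net) framing.
anthropic = RevenueMix(direct=10.0, channel_gross=20.0, platform_share=8.0)

gross = gross_revenue(anthropic)
net = net_revenue(anthropic)
print(f"gross: ${gross:.1f}B, net: ${net:.1f}B, gap: ${gross - net:.1f}B")
```

Whatever the real split, the gap between the two methods always equals the platforms' share; changing `direct` or `channel_gross` moves both totals together but leaves the $8 billion difference untouched.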

With both companies in their IPO sprint, whichever posts the larger revenue figure directly determines its ranking in investors' minds.

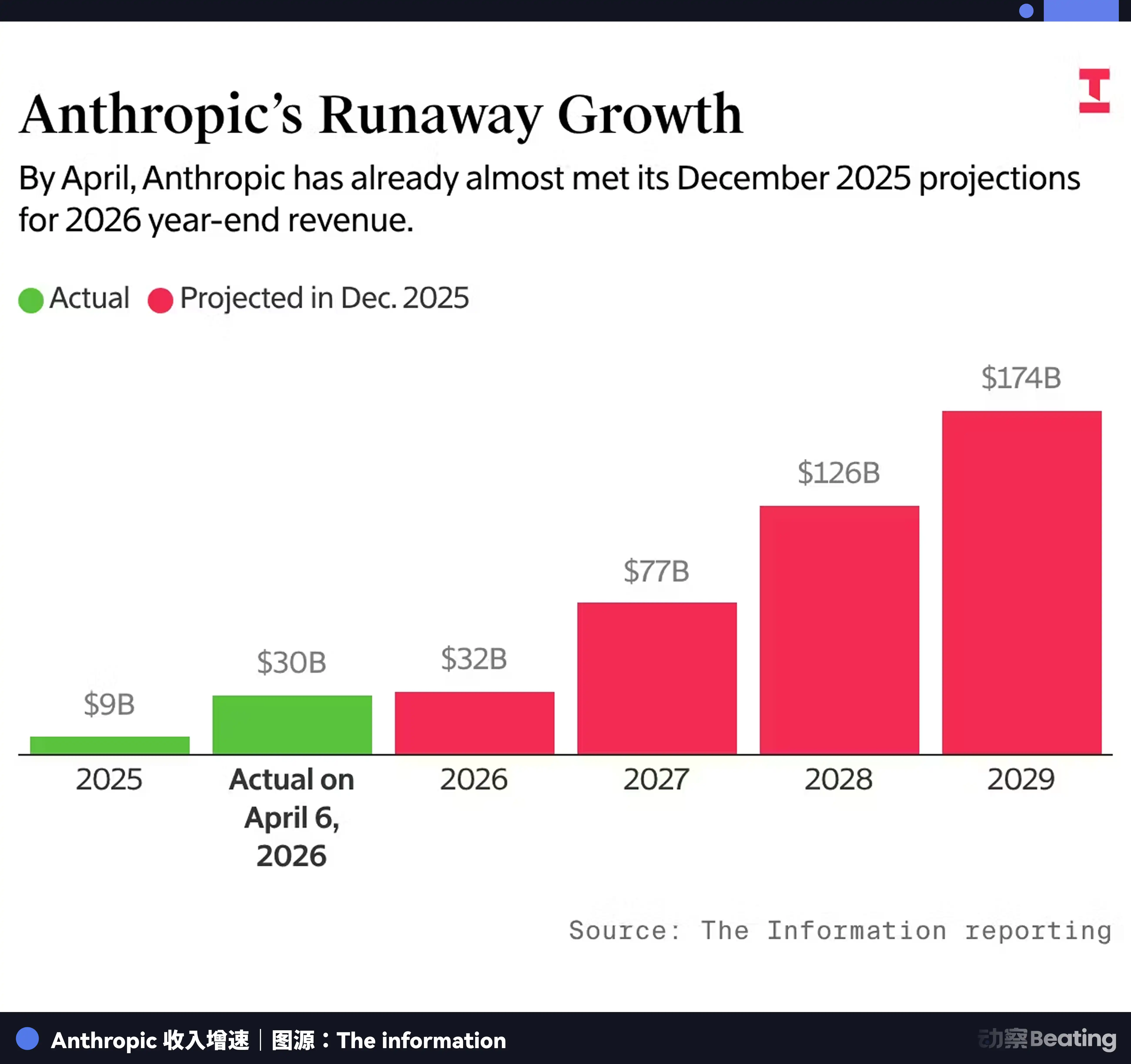

Anthropic's revenue is estimated at $9 billion at the end of 2025, soaring to $30 billion by March 2026, growth more than three times as fast as OpenAI's. If that momentum takes root in investors' minds, OpenAI's $850 billion valuation faces a pointed question: why are you worth twice as much while growing three times more slowly?

The real purpose of Dresser's $8 billion accusation, then, is to plant a subconscious idea in investors' minds: Anthropic's numbers should not be taken at face value.

There is an irony in this attack, however. In the same letter, Dresser enthusiastically promotes OpenAI's new partnership with Amazon. If OpenAI comes to rely heavily on the Bedrock distribution channel, it will face the same gross-versus-net question.

The whip used today to strike others may fall upon oneself tomorrow.

The accounting dispute is merely the most quantifiable part of the letter. More worth dissecting is Dresser's qualitative verdict on the essence of Anthropic's brand.

Fear or Responsibility

"Their narrative is built on fear, restrictions, and the idea that a few elites should control AI."

Most reports read this sentence as an insult, but it is more than that.

Dresser is reframing. She wants investors to see Anthropic's safety-brand premium as manufactured scarcity, a packaged form of differentiation.

On April 7, Anthropic announced its most powerful model to date, Claude Mythos Preview, while simultaneously announcing that this model would not be publicly released. Instead, it introduced a restricted access program called Project Glasswing, allowing 12 key partners to use Mythos to scan and fix zero-day vulnerabilities in critical software.

Anthropic claims that Mythos has discovered thousands of high-risk vulnerabilities across every mainstream operating system and browser over the past few weeks, including a remote code execution vulnerability in FreeBSD that existed for 17 years.

A model so powerful it cannot be released publicly, placed in the hands of a few elite institutions to safeguard the world's cybersecurity: this is the ultimate form of Anthropic's safety narrative.

Some researchers point out that several security companies have reproduced some of the vulnerabilities Mythos found using older, cheaper public models. Anthropic nevertheless packaged the link between discovery and exploitation as a watershed moment and set the rhythm of its product releases around it.

In OpenAI's narrative framework, Glasswing is the perfect example of a few elites controlling AI. You have a powerful tool, you do not let most people use it, you claim it is for safety, but the objective effect is that only those you trust can gain this ability.

In Anthropic's narrative framework, Glasswing is the ultimate proof of responsible AI development. We are strong enough to discover vulnerabilities that others cannot, and restrained enough not to throw this ability directly into the market.

Both narratives serve their respective IPO valuation models.

Anthropic needs investors to believe that the safety brand premium is sustainable. OpenAI needs investors to believe that scale and computing power are the ultimate moat.

Who Fired the First Shot?

It is a seemingly simple question that ultimately has no answer.

There is so much evidence, and each side can read every piece of it as proof that the other moved first.

From Dario Amodei's perspective, the starting point is the collapse of governance inside OpenAI. In 2017 he witnessed the ruthless layoffs pushed by Musk. After Altman took over in 2018, Altman promised him he would limit the powers of Brockman and Szulczewski, while making contradictory promises to those two. In 2020, Altman accused the Amodei siblings of secretly giving the board unfavorable feedback, then denied having said so when confronted.

These experiences shaped a deep distrust.

From Altman's perspective, the starting point is the way Dario left. At the end of 2020, Dario departed with nearly ten core colleagues to found Anthropic, and his resignation memo divided AI companies into market-oriented and public-benefit-oriented ones. In that declaration of brand positioning, he preemptively placed OpenAI in the morally inferior category. Every public statement Anthropic has made since, from billboards on San Francisco streets to Super Bowl ads, has reinforced that classification.

The tangle of grievances between the two men and their companies can be traced back to OpenAI's founding in 2015. Each step was a defensible choice, yet together they led to an irreversible rupture.

Who struck first matters less than why the animosity cannot end, and why every clash only reinforces it.

The answer lies in brand structure.

Once Anthropic positions itself as the righteous party that left due to safety principles, OpenAI automatically becomes the problematic party that was left behind. Once OpenAI characterizes Anthropic as a fear-mongering elitist, every cautious move by Anthropic becomes evidence supporting this accusation.

The two brands are defined in opposition to each other, which makes the other side's existence the best advertising material for one's own brand.

Anthropic needs an unsafe rival to prove the necessity of a safety narrative. OpenAI requires a hypocritical rival to justify the legitimacy of an open narrative.

This is a Nash equilibrium: neither side has an incentive to stop attacking, because a ceasefire would damage its brand more than continuing to fight.
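The equilibrium claim can be checked mechanically on a two-player payoff table. The payoffs below are invented ordinal scores (higher means better for that company's brand), chosen only to encode the article's premise that stopping costs more than fighting; they are assumptions, not measurements.

```python
from itertools import product

STRATEGIES = ("attack", "ceasefire")

# HYPOTHETICAL ordinal payoffs: (score for Anthropic, score for OpenAI).
# Encodes the premise that each brand needs the other as a foil, so
# ceasing fire, alone or together, scores worse than fighting.
PAYOFFS = {
    ("attack", "attack"): (2, 2),
    ("attack", "ceasefire"): (3, 0),
    ("ceasefire", "attack"): (0, 3),
    ("ceasefire", "ceasefire"): (1, 1),
}


def is_nash(profile):
    """True if neither player gains by deviating unilaterally from `profile`."""
    anthropic_move, openai_move = profile
    anthropic_score, openai_score = PAYOFFS[profile]
    # Can Anthropic do better by switching while OpenAI's move stays fixed?
    if any(PAYOFFS[(alt, openai_move)][0] > anthropic_score for alt in STRATEGIES):
        return False
    # Can OpenAI do better by switching while Anthropic's move stays fixed?
    if any(PAYOFFS[(anthropic_move, alt)][1] > openai_score for alt in STRATEGIES):
        return False
    return True


equilibria = [p for p in product(STRATEGIES, repeat=2) if is_nash(p)]
print(equilibria)  # [('attack', 'attack')]
```

Under these assumed payoffs, attacking beats ceasing fire whatever the other side does, so mutual attack is the only equilibrium. Different payoffs, for example a large reward for a joint truce, could change that conclusion.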

Every round of escalation in 2026 perfectly illustrates this structure.

In February, Anthropic aired four ads during the Super Bowl, opening with the words Betrayal, Deception, Treachery, and Violation splashed across the screen, mocking OpenAI's decision to put ads in ChatGPT. The closing line was "Ads are coming to AI. But not to Claude."

Altman responded on X with a 420-word rebuttal. He first said the ads were funny and made him laugh, then called them dishonest, accused Anthropic of double talk, and said it sells expensive products to the wealthy.

NYU marketing professor Scott Galloway remarked that a market leader should never mention a competitor by name: Hertz never mentions Avis, and Coca-Cola never mentions Pepsi. By that standard, Altman's response was itself a sign of weakness.

On February 19, at the AI summit in New Delhi, Modi invited thirteen tech leaders on stage to join hands and raise them for a group photo. Everyone did, except Altman and Dario, who stood side by side, each raising a fist without touching the other. The image went viral on social media. Altman later said he did not know what was happening; Dario made no public comment.

At the end of the same month, the Pentagon dispute erupted. Anthropic refused to delete two clauses from a contract and was subsequently barred from use across federal agencies. Hours later, OpenAI announced a contract with the Pentagon. In March a judge ruled that the Pentagon's actions constituted unconstitutional retaliation, and Claude briefly shot to number one on the App Store.

In the first week of April, Anthropic released Mythos and Glasswing. In the second week, two OpenAI memos leaked one after the other. Every round of attack landed at a critical juncture: a funding round closing, a product launch, or IPO preparations.

So, in this back-and-forth, who is reaping the benefits?

The Winner Is Not in the Ring

Dresser ends the letter with a seemingly mild statement: "Customers will benefit from the competition."

But on the eve of the IPO, the customers are not the winners in this verbal war; the investors are. Beyond investors, there is a quieter group of beneficiaries.

Amazon is the biggest winner.

Dresser stated explicitly in the letter that the Microsoft partnership limits OpenAI's access to customers, while praising demand through the Amazon Bedrock channel. In effect, OpenAI is casting Amazon as its second marriage, even though Anthropic's first marriage is also built on AWS.

Amazon invested $50 billion in OpenAI and is also one of Anthropic's largest cloud partners. Regardless of which company wins, Amazon stays at the poker table.

More intriguing still, the core evidence behind Dresser's accusation that Anthropic inflates its revenue involves Amazon itself. If the SEC ultimately arbitrates this accounting dispute during IPO review, Amazon, as the other party to those transactions, will hold the most critical facts. It would be both the key evidence in the referee's hands and the sponsor of both players.

The second winner is Google.

While OpenAI and Anthropic define themselves in reference to each other, Google sits outside this binary narrative.

Gemini 3 surpassed GPT-5.1 on benchmarks at the end of 2025. But because the media and investors were absorbed by the drama between OpenAI and Anthropic, Google gained narrative freedom: it could advance its products quietly without attaching a moral meaning, safe or open, to every move.

The third winner is the policymakers who set the rules.

OpenAI co-founder Greg Brockman personally donated $25 million to the pro-Trump MAGA committee, and also raised more than $125 million through a political action committee launched with a16z that supports a single federal regulatory framework and opposes independent state legislation. Anthropic donated $20 million to Public First Action, which advocates stronger AI regulation.

Both companies use political donations to buy their preferred regulatory environments.

After the Pentagon dispute, Anthropic even hired Ballard Partners, a lobbying firm with close ties to the Trump administration. Having refused the Pentagon's terms on safety principles, it turned around and bought a ticket to the White House.

The more heated the war of words, the more both companies need to lobby in Washington, and the greater the bargaining power of the policymakers there.

The last winner is the IPO underwriters.

The conflict between the two companies helps investors avoid the trouble of understanding technical differences. Do you believe safety is a priority? Buy Anthropic. Do you believe scale is a priority? Buy OpenAI.

For those selling the shares, this is the easiest pitch there is. There is no need to make investors understand complex technical differences; you only need to ask them one question: which side are you on?

A commercial competition encoded as a family feud no longer requires data to persuade investors; it needs a position.

All these winners have one thing in common: their interests depend not on which company wins but on the battle continuing. The harder the two fight, the more valuable Amazon's channel becomes, the more bargaining chips regulators hold, and the easier the story is for underwriters to sell.

And the two parties involved also seem unwilling to stop, because if they do, their valuation stories lose the most dramatic tension.
