Author: Sleepy.txt

In 2016, The New Yorker published a feature on Sam Altman titled "Sam Altman's Manifest Destiny." At 31 years old, he was already the president of Y Combinator, the most powerful incubator in Silicon Valley.

The article included a telling detail: Altman liked fast cars, owned five sports cars, and enjoyed renting planes. He told the reporter that he had two bags, one of which was an escape bag prepared for a quick getaway.

He had also stocked up on firearms, gold, potassium iodide (for protection against nuclear radiation), antibiotics, batteries, water, and military-grade gas masks. He had even set aside a piece of land in Big Sur, California, that he could fly to at any time.

Ten years later, Altman has become the most dedicated author of apocalypse scenarios and the most active promoter of the ark. He warns the world that AI would destroy humanity while accelerating that very process. He says he is not motivated by money while building a personal investment empire worth $2 billion. He calls for regulation while pushing out anyone who tries to apply the brakes.

Rather than a schizophrenic lunatic or a cunning fraud, he might be better described as the most standard and most successful product of Silicon Valley's vast machine. His "destiny" is to forge humanity's collective anxiety into his scepter and crown.

The End of Days Is Good Business

Altman's business model can be summarized in one sentence: package a business as a holy war for the survival of humanity.

This approach began during his YC days. He transformed YC from a small workshop that gave early startups a few thousand dollars into a massive entrepreneurial empire. He established YC Research, which funded projects that weren't profitable but sounded grand. He told reporters that YC's goal was to fund "all important fields."

At OpenAI, he took this model to its extreme. What he sold was a packaged worldview: AI apocalypse plus a redemptive solution.

He was better than anyone at depicting the "extinction risks" posed by AI. He joined hundreds of scientists in signing a statement that placed the risks of AI alongside those of nuclear war. When he testified in the Senate, he said, "We feel a slight fear about [AI's potential], and people should be happy about that." He implied that this fear was, in itself, a beneficial warning.

Each of these statements could make headlines, serving as free advertising for OpenAI. Carefully crafted fear is the most efficient lever for attention. Which technology excites capital and the media more: one that "can enhance efficiency" or one that "could destroy humanity"? The answer is obvious.

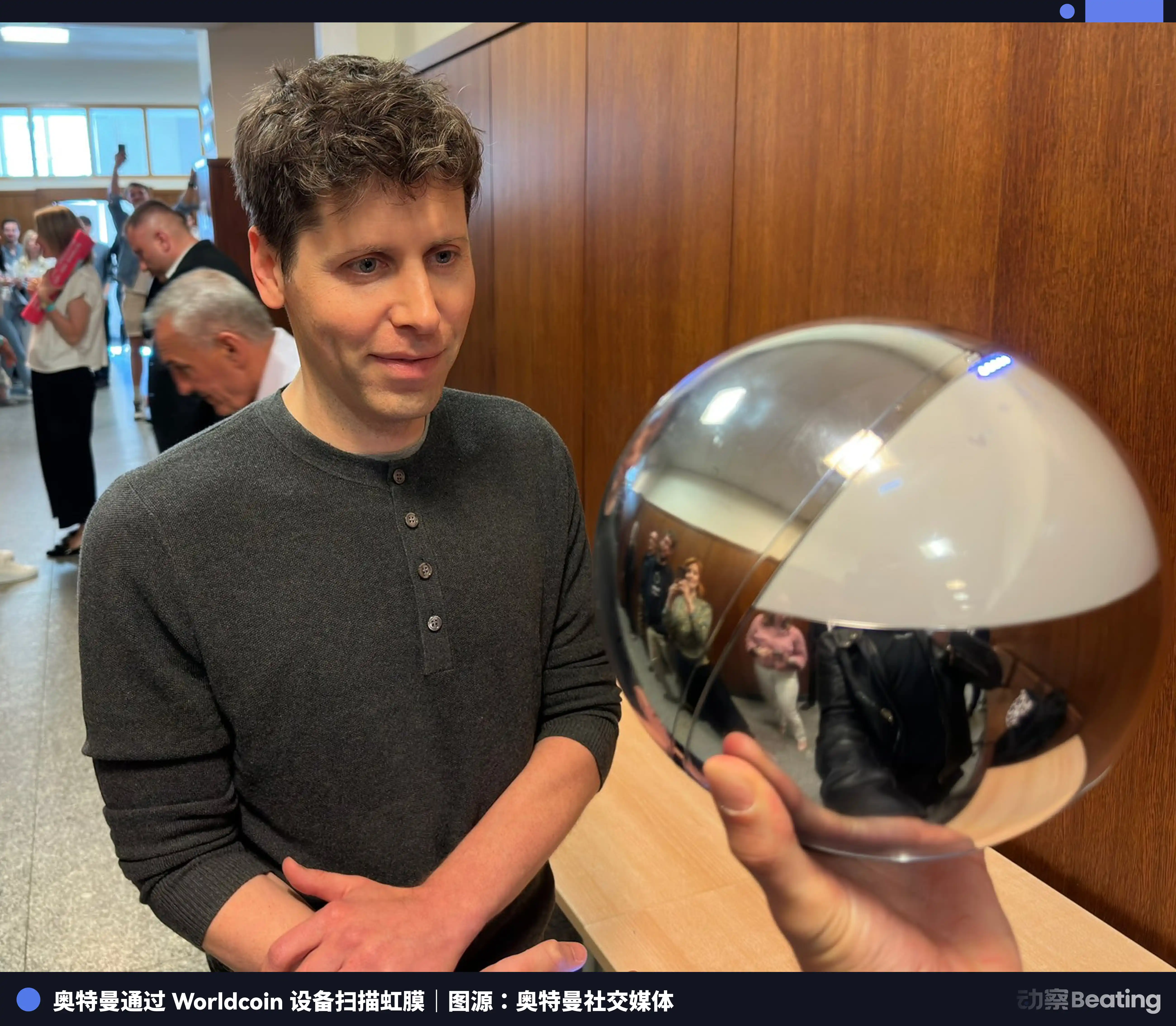

As for the redemption part, he has a ready-made product: Worldcoin. Once fear is embedded in the public consciousness, selling the solution follows naturally. The project uses a basketball-sized silver sphere to scan people's irises around the world, claiming the goal is to distribute money to everyone in the AI era. The story sounds nice, but this practice of trading money for biometric data quickly raised alarms among several governments. Countries including Kenya, Spain, Brazil, India, and Colombia halted or investigated Worldcoin on data-privacy grounds.

But this might not matter to Altman at all. What’s important is that through this project, he successfully crafted his image as the "only one with a solution."

Packaging fear and hope for sale is the most efficient business model of this era.

Regulation Is My Weapon, Not My Shackles

How does someone who constantly speaks of the end of the world conduct business? Altman's answer is: turn regulation into a weapon.

In May 2023, he testified before the U.S. Congress for the first time. Unlike other tech CEOs who complained about regulation, he asked for it outright: "Please regulate us." He proposed a licensing system for AI under which only licensed companies could develop large models. This cast him as a responsible industry leader. At that moment, however, OpenAI was technically far ahead, and a stringent, high-barrier regulatory regime would have served mainly to keep potential competitors out.

However, as time went on, and especially as competitors like Google and Anthropic caught up and the open-source community gained strength, Altman's rhetoric on regulation shifted subtly. He began arguing in various forums that overly strict regulation, especially mandatory pre-release reviews for AI companies, could stifle innovation and would be "catastrophic."

At this point, regulation was no longer a moat but a stumbling block.

When he held an absolute advantage, he called for regulation to lock it in. Once the advantage was gone, he called for freedom to pursue breakthroughs. He even tried to extend his influence to the far upstream end of the industry chain. He proposed a $7 trillion chip initiative and sought backing from sovereign wealth funds in the UAE and elsewhere, with the aim of reshaping the global semiconductor landscape. This goes far beyond a CEO's remit and resembles the ambition of someone seeking to shape the global order.

Behind all this is OpenAI's rapid transformation from a nonprofit organization into a commercial giant. When it was founded in 2015, its mission was to "safely ensure AGI benefits all humanity." In 2019, it established a "capped profit" subsidiary. By early 2024, observers noted that the word "safely" had been quietly removed from OpenAI's mission statement. Although the company's structure remained "capped profit," its pace of commercialization had clearly accelerated. Revenue soared from several tens of millions of dollars in 2022 to more than $10 billion a year by 2024, and its valuation rocketed from $29 billion to well over $100 billion.

When a person starts looking at the stars and discussing humanity’s fate, it's best to check where their pocketbook is.

Persona: The Charismatic Leader's Exemption

On November 17, 2023, Altman was ousted by the board he had personally selected. The board cited a "lack of transparency in communication with the board."

The events of the next five days resembled a referendum on faith more than a business dispute. President Greg Brockman resigned. Some 95% of employees, more than 700 people, signed a letter demanding that the board resign, or they would leave en masse for Microsoft. Satya Nadella, CEO of the largest investor, Microsoft, publicly said they were welcome to join. In the end, Altman made a triumphant return, was reinstated, and purged almost every board member who had opposed him.

Why could a CEO deemed "not transparent" by the board return unscathed, even gaining more power?

Afterwards, the ousted board member Helen Toner disclosed details. Altman had concealed his actual control of the OpenAI Startup Fund from the board and had lied multiple times about the company's crucial safety processes. The board had also learned of the major ChatGPT release via Twitter. Any one of these allegations, if true, would be enough to get a CEO fired a hundred times over.

Yet Altman emerged unscathed, because he is not an ordinary CEO. He is a "charismatic leader."

The concept was introduced a century ago by the sociologist Max Weber. It describes a form of authority that derives not from position or law but from the leader's "extraordinary personal charisma." Followers believe in him not because he did something right, but because he is who he is. This belief is irrational. When the leader makes a mistake or is challenged, the followers' first response is not to question the leader but to attack the challenger.

OpenAI's employees embodied this. They did not believe in the procedural justice of the board; they only believed in the "destiny" that Altman represented, feeling the board members were "hindering humanity's progress."

After Altman's return, OpenAI's safety team was soon disbanded. Chief Scientist Ilya Sutskever, who had initially led the charge to oust Altman, also departed. In May 2024, Jan Leike, co-lead of the safety team, resigned. He wrote on Twitter that the company's "safety culture and processes have taken a backseat to shiny products."

Before a "charismatic leader," facts do not matter, process does not matter, and safety does not matter. The only thing that matters is belief.

The Prophets on the Assembly Line

Sam Altman is just the latest and most successful model on Silicon Valley's "prophet" production line.

There are many other familiar figures on this line.

Take Elon Musk, for example. In 2014, he was everywhere, warning that with AI we are "summoning the demon." Yet his own Tesla is the world's largest robotics company and runs the most complex AI applications. After his rift with Altman, he founded xAI in 2023, openly declaring war. Just a year later, xAI's valuation exceeded $20 billion. He warns of the demon's arrival while building one of his own. This contradictory narrative mirrors Altman's.

Then there's Mark Zuckerberg. A few years ago, he bet his company's fortunes on the metaverse and burned nearly $90 billion, only to discover it was a dead end. He immediately pivoted, shifting the company's core narrative from the metaverse to AGI. In 2025, he announced the creation of Meta Superintelligence Labs and personally recruited talent for it. Both visions concern humanity's future, both require astronomical investment, and both strike the same messianic pose.

Then there's Peter Thiel. As Altman's mentor, he is more like the chief designer of this production line. He invests in companies promoting the "technological singularity" and "immortality." At the same time, he has bought land and built doomsday bunkers in New Zealand, where he obtained citizenship after spending just 12 days in the country. His company Palantir is one of the world's largest data surveillance firms and serves mainly government and military clients. He prepares for civilization's collapse while crafting the sharpest surveillance tools for those in power. In early 2026, during military operations against Iran, Palantir's AI platform served as the brain. It integrated vast amounts of data from spy satellites, communications intercepts, drones, and Claude models, and converted chaotic information in real time into actionable intelligence that ultimately identified targets for neutralization.

Each of them plays the dual role of "warning of impending doom" and "pushing the doom forward." This is not a split personality. It is a business model that capital markets have validated as highly effective. They capture attention, capital, and power by creating and selling structural anxiety. They are both products and shapers of this system, the "evil behind the grand narrative."

Silicon Valley is no longer merely a place for technology output; it is a factory for producing "modern myths."

Why Does This Trick Work Every Time?

Every few years, a new prophet emerges from Silicon Valley, captivating capital, the media, and the public with a grand narrative of apocalypse and redemption. The trick is repeated over and over, yet it keeps working, because each step precisely targets a specific vulnerability in human cognition.

Step one: Manage the rhythm of fear, not just create fear.

The potential risks of AI are real, but risks can be discussed calmly. This group chose to present them in the most dramatic way possible, and they masterfully control the rhythm at which fear is released.

When to instill fear in the public, when to offer hope, and when to sound the alarm again are all deliberately timed. Fear is the fuel, but the timing and manner of ignition are the real technology.

Step two: Turn the incomprehensibility of technology into a source of authority.

AI is a black box, entirely opaque to the vast majority of people. When something complex arises that cannot be fully understood, people instinctively cede the power of interpretation to "those who know it best." They understand this deeply and turn it into a structural advantage. The more they describe AI as mysterious, dangerous, and beyond ordinary understanding, the more irreplaceable they become.

What makes this logic terrifying is that it is self-reinforcing. Any outside skepticism is automatically dismissed because the skeptic "doesn't understand enough." Regulators don't understand the technology, so their judgment is untrustworthy. Academic critics haven't worked hands-on with frontier models, so their concerns are merely theoretical. In the end, only they themselves are qualified to judge themselves.

Step three: Substitute "meaning" for "interest," prompting followers to willingly relinquish criticism.

This is the hardest layer of the whole system to detect, and also its most lasting source of power. What they sell is never just a job or a product, but a narrative of cosmic significance: you are deciding humanity's fate. Once followers accept this narrative, they actively give up independent judgment. In the face of a mission concerning "human survival," questioning the leader's motives makes the questioner look petty, even like an obstacle to history. The narrative leads people to willingly surrender their critical faculties and to interpret that surrender as a noble choice.

Putting these three steps together clarifies why this system is so difficult to shake. It doesn’t rely on lies; it relies on a precise understanding of the human cognitive structure. It first creates a fear you cannot ignore, then monopolizes the interpretation of that fear, and finally uses "meaning" to turn you into its most loyal disseminator.

In this system, Altman is the most seamlessly operating model to date.

Whose Destiny?

Altman has always claimed that he holds no equity in OpenAI and draws only a nominal salary. That claim has served as the cornerstone of his "passion-driven" narrative.

However, Bloomberg calculated in 2024 that his personal net worth was approximately $2 billion. This wealth comes mainly from a series of investments he made as a VC over the past decade. His early investment in the payments company Stripe reportedly returned hundreds of millions of dollars, and his stake in Reddit earned him a hefty profit when the company went public. He also bet heavily on the nuclear fusion company Helion while arguing that AI's future depends on an energy breakthrough. OpenAI then began talks with Helion about large-scale electricity purchases. He says he recused himself from the negotiations, but anyone can see the chain of interests.

It is true that he holds no direct equity in OpenAI, but he has built a vast investment empire centered on himself and orbiting OpenAI. Every grand sermon he gives about humanity's future adds value to that empire's territory.

Now look back at his doomsday escape bag filled with firearms, gold, and antibiotics, and at that land in Big Sur he could fly to at any moment. Don't they take on a new meaning?

He has never concealed this. The escape bag is real, the bunker is real, and his obsession with doomsday is real too. Yet he is also the one most actively driving the doomsday scenario. The two are not contradictory, because in his logic the apocalypse doesn't need to be prevented, only positioned for in advance. He is obsessed with playing the one person who sees the future and prepares for it.

Whether it's preparing a physical escape bag or building a financial empire around OpenAI, both amount to the same thing: securing the surest winning position for himself in an uncertain future that he is helping to drive.

In February 2026, shortly after declaring his support for the red line that "AI should not be used in warfare," he signed a contract with the Pentagon. This isn't hypocrisy. It is an inherent requirement of his business model. His moral stance is part of the product, while commercial contracts are the source of profit. He needs to embody both the compassionate savior and the ruthless doomsday prophet, because only by playing both roles can he keep his narrative going and make his "destiny" manifest.

The real danger has never been AI, but those who believe they have the right to define humanity's fate.

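Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page infringes your rights, please email proof of the relevant rights and proof of identity to support@aicoin.com, and the platform's staff will review it.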