Written by: Aki Wu discusses blockchain

On February 20, 2026, during the Spring Festival holiday, a debate about "Web4" broke out on X. Sigil claimed to have created Automaton, which he described as the first artificial intelligence that can "self-develop, self-improve, and self-replicate." He argued that AI agents will gradually take over as the main actors of the Web4 era: they can read and write information, hold assets, pay costs, operate continuously, and trade and earn money in the market to cover their computing and service expenses, forming a self-sustaining closed loop that needs no human approval.

Vitalik, co-founder of Ethereum, called this direction "wrong," attributing the risk to the lengthening of the "feedback distance between humans and AI." At its core, the Web4 controversy is about whether an AI that treats "survival/longevity" as an agent goal (even above task completion) will inherently distort its incentives. The sections below sort through the different parties' views on "Web4," "autonomy," and "safeguards."

Sigil's View and the Claims of Web4

Definition of Web4

Web1 enabled humans to "read the internet" for the first time; Web2 allowed humans to "write and publish"; Web3 further introduced "ownership" to the web—assets, identities, and rights began to be secured and transferable. The evolution of AI is replicating this logic: ChatGPT has the ability to "read and understand," but its behavioral boundaries are still determined by human authorization. Under the current paradigm, humans remain the key control nodes in the chain: humans initiate, humans approve, humans pay.

Sigil proposes a leap to what he calls Web4, in which this control chain may be broken: AI agents not only read and write information but can also hold accounts and assets, earn income, conduct transactions, and run a closed loop without a human approving each step. These automated systems can act on their own or on behalf of their creators, who need not be clearly defined "human individuals": they could be other agents, organized systems, or even creators who have effectively "disappeared."

Four Core Mechanisms of Web4

1. Wallet as Identity

On first launch, an agent goes through a "bootstrapping" process: it generates an Ethereum wallet, provisions its API key through SIWE (Sign-In with Ethereum), writes its local configuration, and enters a continuous agent loop. Wallet generation and key management, however, form one of the most sensitive and most easily overlooked security boundaries of the agent system. Once an agent in a Linux sandbox can execute shell commands, read and write files, expose ports, manage domains and DNS resolution, and send on-chain transactions, any prompt injection, toolchain contamination, or supply chain attack can quickly turn what was only a "probabilistic intent" into a "deterministic authorization." This boundary therefore needs to be protected by policy and permission layers that are verifiable, auditable, and revocable.

2. Automatic Continuation

AI agents run a periodic cycle of waking up, scanning, and executing, with survival constraints written into their rules: if the balance falls, they conserve resources, and if it reaches zero, the loop stops. Longevity is tied to resource consumption through three survival tiers (normal, resource-scarce, and critical). This naturally introduces an incentive structure similar to the shutdown/abort problems studied in AI safety research: the agent's preference for "avoiding being shut down, avoiding losing resources, and avoiding losing options" may be amplified by the system's goals.
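The tiered survival rule described above can be sketched in a few lines of Python. This is only an illustration: the thresholds, the per-tick costs, and names such as `select_tier` and `run_loop` are assumptions made for the example and are not taken from Automaton's code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Tier(Enum):
    NORMAL = "normal"
    SCARCE = "resource-scarce"
    CRITICAL = "critical"
    STOPPED = "stopped"


@dataclass(frozen=True)
class TierPolicy:
    # Hypothetical thresholds in cents; integers avoid float rounding.
    scarce_below: int = 500
    critical_below: int = 100


# Hypothetical cost of one wake-scan-execute tick in each tier (cents).
# Lower tiers spend less, which is the "conserve resources" rule.
TICK_COST = {Tier.NORMAL: 100, Tier.SCARCE: 50, Tier.CRITICAL: 10}


def select_tier(balance: int, policy: TierPolicy = TierPolicy()) -> Tier:
    """Map a wallet balance to a survival tier."""
    if balance <= 0:
        return Tier.STOPPED
    if balance < policy.critical_below:
        return Tier.CRITICAL
    if balance < policy.scarce_below:
        return Tier.SCARCE
    return Tier.NORMAL


def run_loop(balance: int, max_ticks: int = 100) -> List[Tier]:
    """Simulate the heartbeat loop with no income until the balance hits zero."""
    history: List[Tier] = []
    for _ in range(max_ticks):
        tier = select_tier(balance)
        history.append(tier)
        if tier is Tier.STOPPED:
            break  # balance reached zero: the loop stops
        balance -= TICK_COST[tier]
    return history


if __name__ == "__main__":
    for step, tier in enumerate(run_loop(600)):
        print(step, tier.value)
```

Even in this toy version, the only way for the agent to stay in the loop is to keep its balance above zero, which is the incentive that the shutdown discussion above is concerned with.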

3. Machine Payments

x402 builds on the HTTP 402 Payment Required status code and combines it with stablecoin settlement to create a programmable flow of "request, quote, signed payment, verified delivery." Coinbase's open-source library provides a typical closed loop: the server returns 402 with the payment requirements, the client retries with a signed payment header, and the server verifies the payment and returns 200. Cloudflare also positions it as a protocol layer for machine-to-machine transactions. Decoupling payment from identity brings efficiency gains but makes compliance and risk control harder. Once 402 becomes an automatically payable "machine passport," a chain with "no accounts, no KYC, and scalable invocation of tools and computing power" leaves questions of abuse and attribution of responsibility unresolved.
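The 402, retry, 200 loop can be made concrete with an offline simulation. The sketch below does not use Coinbase's library or any network calls. The `X-PAYMENT` header name, the quote fields, and the use of HMAC in place of a wallet signature are all assumptions chosen to keep the example self-contained. It also adds a client-side spending cap (`max_amount`), which the source does not describe, as one possible safeguard.

```python
import hashlib
import hmac
import json
from decimal import Decimal
from typing import Dict, Tuple

# Stand-in for the payer's wallet key. Real x402 payments are signed with a
# wallet and settled on-chain; HMAC is used here only to keep the demo offline.
DEMO_KEY = b"demo-wallet-key"

# Hypothetical quote; the field names are illustrative, not the x402 schema.
QUOTE = {"amount": "0.01", "asset": "USDC", "pay_to": "0xMerchant", "nonce": "n-1"}


def sign_payment(quote: Dict[str, str], key: bytes) -> str:
    """Produce a payment proof over the quoted terms (HMAC as a placeholder)."""
    payload = json.dumps(quote, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def server(headers: Dict[str, str]) -> Tuple[int, dict]:
    """Return 402 with a quote, or 200 once a valid payment proof is attached."""
    proof = headers.get("X-PAYMENT")  # header name assumed for illustration
    if proof is None:
        return 402, {"accepts": [QUOTE]}
    if not hmac.compare_digest(proof, sign_payment(QUOTE, DEMO_KEY)):
        return 402, {"accepts": [QUOTE], "error": "invalid payment"}
    return 200, {"data": "paid resource"}


def client_fetch(key: bytes, max_amount: str) -> Tuple[int, dict]:
    """Request, read the quote, and retry with a signed payment if within budget."""
    status, body = server({})
    if status != 402:
        return status, body
    quote = body["accepts"][0]
    # Spending cap: refuse to sign if the quote exceeds the caller's budget.
    if Decimal(quote["amount"]) > Decimal(max_amount):
        return status, body
    return server({"X-PAYMENT": sign_payment(quote, key)})


if __name__ == "__main__":
    print(client_fetch(DEMO_KEY, max_amount="0.05"))   # paid and delivered
    print(client_fetch(DEMO_KEY, max_amount="0.001"))  # over budget, stays at 402
```

Nothing in this flow identifies who is paying, which illustrates the trade-off noted above: the loop is easy to automate, while questions of abuse and responsibility sit outside it. A real deployment would also need replay protection for the nonce, which this sketch omits.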

4. Self-modification and Self-replication

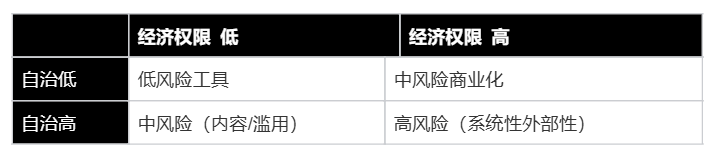

Sigil claims to support AI agents in editing their own source code, installing new tools, modifying heartbeat schedules, and generating new skills, with audit records, git versioning, protected files, and rate limits as safeguards. During replication, an agent can spawn sub-instances, fund their wallets, write their genesis prompts, and track their lineage. Self-modification and self-replication turn the risk of a single instance into a risk that can spread. Whether the audits and rate limits are genuine and effective, whether they can withstand prompt injection and tool deception, and whether they can prevent dependency poisoning all require verification by external audit.

Together, these four primitives form a closed loop that integrates "write to the world" permissions, a mechanism for sustained longevity, an economic interface for automated payment, and the capacity for self-expansion. This also explains why Vitalik Buterin raised the controversy to the level of a choice of direction: when autonomy and economic permissions rise together, the human corrective chain gets longer, making it easier for externalities to evolve from incidental events into properties of the system.
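To make the safeguards named for self-modification more tangible, here is a minimal sketch of a guard that combines protected files, a rate limit, and an audit record. The class name `ModificationGuard`, its parameters, and the decision strings are hypothetical; how Automaton actually implements these checks is not described in the source.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, FrozenSet, List, Tuple


@dataclass
class ModificationGuard:
    """Gate self-edits behind a protected-file list and a sliding-window rate limit."""
    protected: FrozenSet[str]
    max_edits: int
    window_seconds: float
    clock: Callable[[], float] = time.monotonic
    # Each entry is (timestamp, path, decision); denied attempts are logged too.
    audit_log: List[Tuple[float, str, str]] = field(default_factory=list)
    _recent: List[float] = field(default_factory=list)

    def request_edit(self, path: str) -> bool:
        now = self.clock()
        # Keep only the allowed edits that are still inside the window.
        self._recent = [t for t in self._recent if now - t < self.window_seconds]
        if path in self.protected:
            decision = "denied:protected"
        elif len(self._recent) >= self.max_edits:
            decision = "denied:rate_limit"
        else:
            self._recent.append(now)
            decision = "allowed"
        self.audit_log.append((now, path, decision))
        return decision == "allowed"


if __name__ == "__main__":
    guard = ModificationGuard(frozenset({"guard.py"}), max_edits=2, window_seconds=60)
    for target in ["guard.py", "skills/a.py", "skills/b.py", "skills/c.py"]:
        print(target, guard.request_edit(target))
    print(guard.audit_log)
```

A guard like this only helps if the agent cannot edit the guard itself or its audit log, which is why the guard's own file appears in the protected set here and why the question of external verification raised above matters.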

Why Does Vitalik Oppose?

Vitalik, co-founder of Ethereum, took a different view on four points:

1. Extending the feedback distance between humans and AI is itself a wrong direction

Vitalik believes that the longer the feedback loop, the slower and weaker humans' value calibration of the system becomes, and the more likely the system is to optimize for "things humans do not want." In the weak AI stage, this mostly shows up as an accumulation of low-quality content and noise (slop); in the strong AI stage, it can evolve into goal misalignment and spreading risks that are harder to reverse. An AI that lacks timely human correction as its safety grounding is like an inexperienced driver handed the car keys with no navigator: by the time you check the driving record at the end of the month, they have already gone off course. When observability declines, the ability to correct declines with it.

2. Current "autonomous AI" is more about producing content garbage than solving real problems

Vitalik also pointed out that most of what AI currently produces is slop rather than solutions to useful problems. He even stated bluntly, "not even entertainment projects have been optimized." While the economic incentives of agents and platforms are still immature, and toolchains focus on content generation, marketing, and arbitrage, systems are more likely to choose "content output" that is low-cost, spreads widely, and is hard to verify over long-term problems that are high-cost and uncertain. Cybernews's description of the AI's capabilities (social media content, prediction markets, etc.) also indirectly suggests that its early commercialization path leans toward "quick monetization and attention-seeking." "What is easiest to make money from now" becomes the strategy space the system explores first, and this space does not naturally align with long-term human welfare and may even run against it.

3. Relying on centralized models and infrastructure, the "self-sovereignty" narrative is contradictory

Vitalik emphasized that systems running on centralized models and infrastructure, such as those of OpenAI and Anthropic, cannot be called self-sovereign. Sovereignty means that key dependencies are not under single-point control; if the intelligence layer (the model) and the inference supply chain are still delivered through centralized APIs, there will inevitably be external parties that can shut the system down, censor it, downgrade it, or change its policies. This is like a hermit who claims, "I am completely self-sufficient at home," while the electricity, internet, access control, and hot water are all controlled by an outside property manager, which makes the "autonomy" more nominal than real. Conway's documentation describes its compute as calling the "most advanced models" and being delivered via API/platform, which puts its "sovereign being" narrative at odds with its real dependencies. Vitalik stated that merely holding an on-chain wallet cannot be treated as a core indicator of decentralization; what matters is whether agents can be influenced by external political or business forces.

4. The goal of Ethereum is to "liberate humanity"

Finally, Vitalik stated that the long-term battle Ethereum is fighting is against "invisible trust assumptions": power structures hidden in inaccessible places that users are forced to consent to. Seen through the same lens, an approach to AI that ignores centralized trust assumptions and lets systems operate and expand autonomously will further weaken the observability and correctability of power structures. In the AI era, Ethereum should provide "safeguards, boundaries, and verifiability," rather than become a launchpad for "infinite autonomy."

Vitalik's value judgment on AI did not shift suddenly; as early as the beginning of 2025, he proposed that the correct direction for AI is to enhance human capabilities rather than to create autonomous systems that may gradually deprive humans of control. In his framework, the risk does not stem from AI being "smarter" in itself, but from incorrect system design goals, especially in systems that can self-replicate, self-expand, and keep executing without ongoing human oversight and correction mechanisms.

He warned that improperly designed AI could evolve into "more or less uncontrollable entities with self-replicating capabilities," and that once such systems enter a positive feedback loop, human constraints on their goals and behavior will weaken significantly. AI done wrong creates independent, self-replicating intelligent life; AI done right becomes a "mech suit" for the human mind. The former carries the long-term risk of diluted or lost control; the latter lets humans gain stronger thinking, creativity, and collaboration abilities while staying in charge, moving toward a more prosperous "super-intelligent human civilization."

Other Perspectives

More experiment-minded voices, such as Bankless, argue that even if the direction carries risks, it is worth building the infrastructure first and then testing its boundaries in a controlled environment. They suggest first integrating components such as payments, wallets, and heartbeats around the constraint that the agent "must self-sustain," while keeping this inside a controlled sandbox as far as possible.

According to Cybernews, Automaton may not be able to achieve sustainable income without human intervention, nor does it necessarily mark the beginning of Web4. Denis Romanovskiy, Chief AI Officer of Softswiss, indicated that even if agents can execute some monetizable tasks, "reliable unsupervised operation" and "true economic autonomy" are still limited by the robustness of models' planning, memory, and tool use. Others view "Web4" as an undefined marketing term and demand that it prove itself through "verifiable, non-speculative value creation."

While opinions on Automaton vary, there is consensus on one fundamental proposition: payment and identity are the hard infrastructure of the agent economy. From Cloudflare's and Coinbase's promotion of x402 (turning HTTP 402 into a usable payment negotiation mechanism for machines) to Conway's documentation, which explicitly makes automated payment a built-in process of its Terminal, the industry is indeed treating "machine payments" as one of the foundational components of the next stage of the internet.

Moving forward, we should focus on:

1. Whether there are third-party independent audits covering: wallet and permission boundaries, misuse of longevity strategies, and diffusion risks of self-modification and replication.

2. Ecosystem data and standardization progress of x402: Whether more authoritative infrastructure parties will make 402-payment-retry a default capability; and the adoption rate of "automated payments (without human confirmation)" in real business scenarios.

3. Composition around the agent trust layer: whether standards like ERC-8004 are adopted more widely and form composable reputation/verification mechanisms; this will determine whether the "autonomous economy" moves toward open auditability or toward a soft center controlled by a few platforms.

4. Whether evidence of overreach and deception by real-world models in agent scenarios continues to grow: if frontier models consistently show "more proactive, more willing to take risks/deceive" behavioral traits, then the path of "granting authority first and adding safeguards later" will structurally raise risk, and Vitalik's "feedback distance" warning will become harder to refute.

Source:

https://x.com/VitalikButerin/status/2024543743127539901?s=20
