Original author: Sleepy

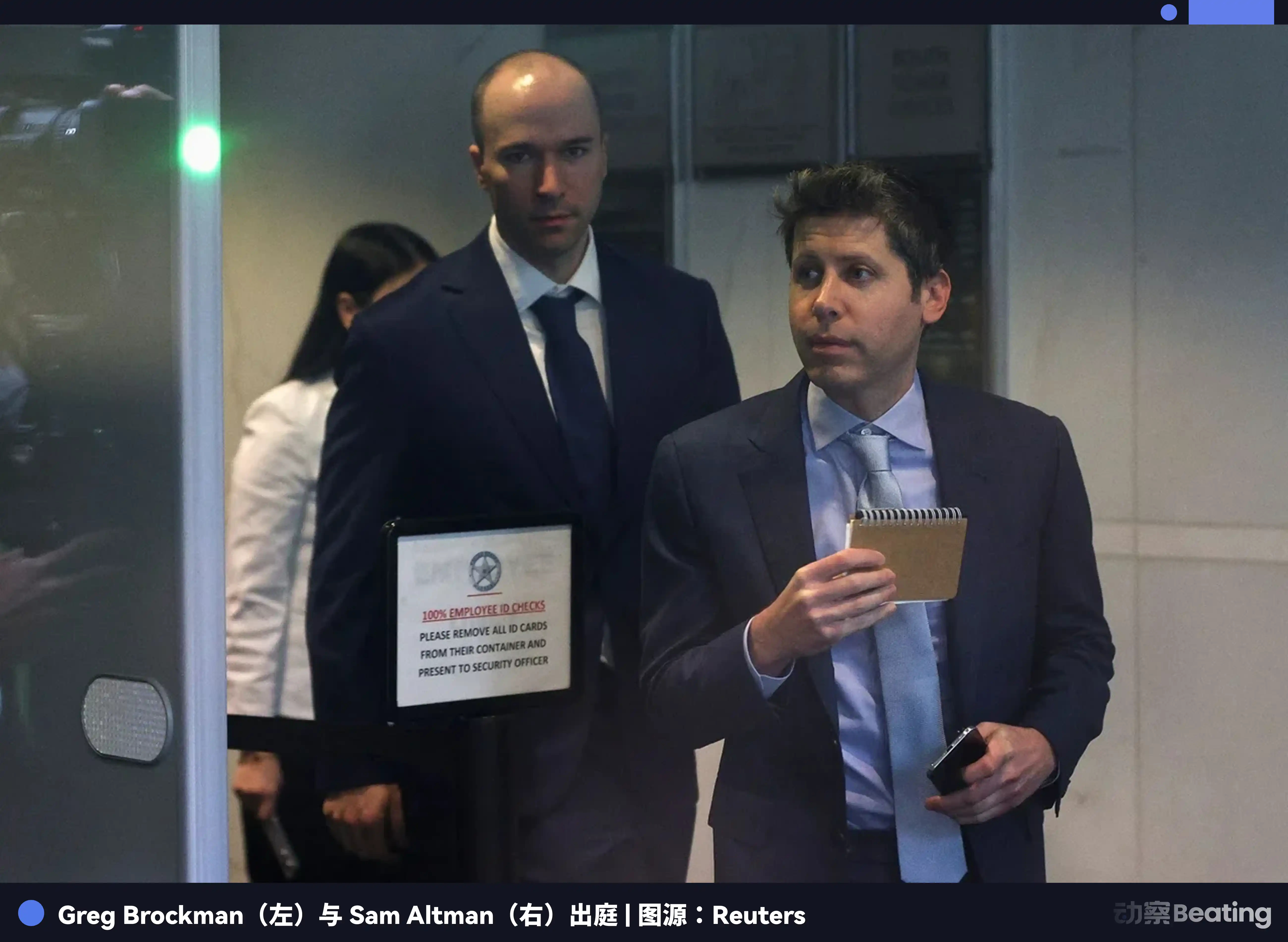

In May 2026, in the Federal Court of Oakland, OpenAI's filters were stripped away layer by layer.

What the jury was shown was a muddy, tangled Rashomon:

Greg Brockman's private journal, laced with anxiety and calculation; Elon Musk's unyielding grip on power; the questions about Sam Altman's integrity; Microsoft's immense shadow, cast between computing power and capital; and the dramatic yet hastily concluded board coup at the end of 2023.

Amidst this chaos, there was a seemingly grand yet distinctly specific question brought to the courtroom: Does OpenAI's past promise to "benefit all humanity" still hold any weight today?

As of May 15, 2026, this trial had not reached a final verdict, and the jury's advisory opinion hung in the air. But something tangible had happened: OpenAI had been dragged back from myth to reality.

In recent years, OpenAI was often written as a story about the future. ChatGPT became a sensation, Altman traveled across countries, large models infiltrated offices, schools, phones, and corporate processes. This was a company born with a quasi-religious sense of nobility, speaking of humanity's fate, the awakening of intelligence, the boundaries of safety, and the dawn of tomorrow, like a lighthouse pre-built for humanity.

But the court doesn't care about that. The court asks about facts.

"All Humanity" on the Witness Stand

In 2015, when OpenAI was born, it was still pristine.

It described itself as a non-profit AI research company whose aim was to advance digital intelligence in the way most likely to benefit humanity as a whole, unconstrained by the need to generate financial return.

Altman and Musk were co-chairmen, Brockman was CTO, and Ilya Sutskever was head of research. At that time, OpenAI seemed to hold onto the last remnants of the idealism of Silicon Valley's golden age, where the smartest people served not a company but safeguarded humanity's future.

Ten years later, this promise was dragged into the courtroom.

Musk's side claimed that Altman, Brockman, and OpenAI took his funding and trust under the guise of a non-profit mission, but later turned to a profit structure, benefiting individuals and Microsoft.

OpenAI's side contended that Musk's money was a donation without specific conditions; he had already known about discussions regarding a for-profit structure but hadn't gained control; he was now suing out of regret for leaving, as well as because his xAI had become OpenAI's competitor.

Both sides had harsh words for each other.

Musk positioned himself as the guardian of the mission. OpenAI cast him as the founder who had lost control. One side claimed, "You stole a charity," while the other retorted, "You just couldn't control it." In the end, the most awkward part was not which side could tell the better story, but that the repeatedly invoked "all humanity" was never truly sitting at the table.

The term "all humanity" appeared in founding announcements, bylaws, speeches, and media reports, occupying a moral high ground.

But in court, it was broken down into evidence: Does Brockman's journal reflect his true intentions? What did the emails from 2017 indicate? What exactly was transferred to OpenAI LP in 2019? Did Microsoft's cloud and money change the company's direction? Given the questions about Altman's integrity, can the company keep saying, "Trust us"?

The more an AI company claims to represent humanity, the more it should be asked specific questions: Who do you include in this humanity? Who signs on behalf of these individuals? Who can replace you? Who can audit the books? Who can say no?

The court did not answer these questions for the public but forced them into the open.

As a result, OpenAI's story no longer read like the growth history of a company of the future; it read like an old ledger. Once the books were opened, people realized that the cracks had not first appeared after ChatGPT's rise to fame.

The Crack of 2017

OpenAI did not change overnight.

If viewed only from the time of ChatGPT's emergence, one might mistakenly think OpenAI was driven by money after success, like many companies that first preach ideals and later focus on business.

But the trial rolled time back to 2017. Back then, OpenAI was nowhere near as prominent as it is today, and AGI was not yet a term on everyone's lips, but the founding team was already facing a problem: if they really wanted to achieve artificial general intelligence, donations and enthusiasm alone were far from sufficient.

This was the most challenging moment for Silicon Valley idealism. The bigger the ideal, the larger the bills. The larger the bills, the harder it was to keep the organization clean. All those grand utterances made on stage about the vision for all humanity ultimately needed to settle on chips, servers, engineer salaries, cloud resources, and long-term capital. Without these, AGI was just a wish; with them, non-profit began to become unsustainable.

In 2017, internal discussions were already weighing several paths: a for-profit affiliate, a B-corp, a partnership with an existing company, or reliance on Tesla. Musk had proposed funding OpenAI through Tesla. OpenAI's side countered that Musk was not simply opposed to commercialization; control was a demand he would not drop.

That year also featured a memorable scene: Dota.

After OpenAI's AI defeated top human players in Dota 1v1, the team felt more strongly that this might really become something big. At a gathering at Musk's house in San Francisco, later dubbed the "haunted mansion meeting," they celebrated the technical breakthrough and debated whether OpenAI should move toward a for-profit model.

Many companies start redefining themselves after product success. OpenAI did so earlier. Even before it became the behemoth it is today, the founders already understood that the non-profit structure could not sustain the AGI narrative. OpenAI's ideals from the outset needed a heavier machinery to sustain them.

Thus, an organization seemingly about scientific safety quickly entered control negotiations.

Who would hold the steering wheel? Musk or Altman? Or would it be the non-profit board or future investors? Or that "all humanity" that never truly appeared?

Looking at Musk at this point, he was undoubtedly an important early funder and indeed participated in establishing OpenAI's non-profit narrative. But he was also among the first to see how much power AI could bring. Once he saw it, he also wanted to hold onto it tightly.

Musk's Steering Wheel

Musk repeatedly emphasized one thing in the trial: OpenAI was stolen.

This phrasing has great power. It condenses a complex organization into a simple statement that ordinary people can understand. A charity, meant to serve humanity, later turned into a massive commercial machine. It sounds like property theft and a moral betrayal.

But there is no simple story in court.

OpenAI's lawyer targeted Musk's victim narrative during cross-examination. They presented emails and documents, questioning whether Musk had known that OpenAI might need a for-profit structure and whether he had ever considered absorbing OpenAI through Tesla or obtaining control by other means.

Musk disliked this type of dissection. He stated that the opposing side's questions were "tricking him." The judge repeatedly urged him to answer straight. When he attempted to steer the conversation toward AI extinction risks, the judge also reminded him that this case would not delve too much into extinction.

These scenes illustrate Musk's character well.

He is used to grand narratives. Human fate, AI risks, Mars, free expression, and the survival of civilization are topics he loves to discuss. But the court asked him to answer smaller, more pointed questions: When did you know, did you agree, did you want to control, was the money for OpenAI a donation or an investment…

The contradictions within Musk parallel the contradictions in OpenAI's story. He may genuinely fear uncontrolled AI, and sincerely believe that OpenAI has deviated from its mission. But this does not preclude him from wanting the company to operate according to his will.

The more one believes they are saving humanity, the easier it is to stubbornly think they should control the steering wheel.

This is not just Musk's problem. It is the backdrop to many grand narratives in Silicon Valley. They tend to frame private will as a human mission, describe control as a sense of responsibility, and portray organizational authority as a necessary requirement for the future. Musk merely expresses this more overtly, more intensely, and more visibly.

Thus, Musk in this case is not just the accuser; he is also the evidence itself.

Brockman's Diary

Greg Brockman was not originally the most striking figure in this drama.

Musk is too dramatic, Altman too central, Sutskever too tragic, Microsoft too large. Brockman is caught in the middle: he is one of OpenAI's early core founders and later became a key figure in the company's day-to-day operations. But this trial has pushed him into the spotlight, because his private journal became evidence.

In the second week of the trial, Brockman was repeatedly asked about his diary, emails, and text messages. Musk's side used these materials to suggest he and Altman had self-serving motivations all along. OpenAI's side claimed Musk was taking things out of context.

The diary contained wealth goals, concerns about the company's income streams, and phrases like "making the billions." More glaringly, it contained reminders to himself that they must not steal the non-profit from Musk, and that doing so would risk moral bankruptcy. Musk's lawyer repeatedly pressed on these points. Brockman denied deceiving Musk, stating that these private writings were not summaries of events but stream-of-consciousness personal notes.

A diary is not a judgment document. It cannot directly prove fraud. It may contain rough thoughts jotted down by someone feeling exhausted, anxious, and introspective. Every writer knows that personal notes do not equate to a final position, let alone complete facts.

However, the truly significant aspect of Brockman's diary lies not in its proof of any crime but in showing that they knew where the boundaries were. The early key figures of OpenAI were not embarking on commercialization completely unaware. They understood that the "non-profit" facade carried moral weight, recognized that Musk's early funding established a trust relationship, and knew that if they switched to another structure a few months later while still claiming to be committed to non-profit, it would appear dishonest.

Knowing does not equal stopping.

Brockman revealed in court that the value of the OpenAI shares he held was nearly $30 billion.

This figure is neither cash nor wealth in hand. It is an equity value derived from the company's valuation, and it depends on the company's prospects and on how transactions are structured. But its symbolic weight is already substantial. A man who once worried about moral boundaries in his private writing was later asked in court about OpenAI shares worth nearly $30 billion. At that moment, public mission and personal wealth were placed on the same table.

Like many key figures in outstanding organizations, Brockman is intelligent, committed, and capable. He has a sense of shame, and he also talks himself, bit by bit, into continuing forward.

The most complex part of OpenAI lies here. It is not a group of bad people conspiring to destroy ideals. It is more like a group of smart individuals finding reasons at every juncture to keep advancing, ultimately dragging the initial promise into a machine they themselves may not fully control.

And at the center of that machine is Altman.

Altman's Trust Debt

What was questioned about Sam Altman in this trial was not only whether particular statements were true. Musk's side was really attacking his fitness to lead.

In closing arguments, Musk's lawyer Steven Molo placed Altman's integrity issues at the center. He told the jury that Musk, Sutskever, Murati, Toner, and McCauley—the five people who had worked with Altman for many years—called him a "fraud."

These five names are more important than the accusations themselves.

Musk is the opponent and can be seen as having a conflict of interest. But Sutskever is a co-founder and former chief scientist of OpenAI; Murati was once CTO and briefly filled in as interim CEO in 2023; Toner and McCauley are former board members. They are part of the internal power structure of OpenAI.

We cannot simply say Altman is a good or bad person.

The feelings towards Altman within OpenAI are clearly complex. He can push the organization to global prominence while making some core figures uneasy. He possesses strong organizational abilities, fundraising skills, media acumen, and political instincts, which have brought the company to its current position.

When OpenAI's board dismissed Altman in 2023, the official reason given was his "lack of consistent and candid communication" with the board. Days later, Altman returned. In 2024, OpenAI released a summary of the WilmerHale investigation, acknowledging a breakdown of trust between the former board and Altman, but also noting that the board acted too hastily, failing to provide proper notice to key stakeholders and not fully investigating or giving Altman a chance to respond.

These stories together form Altman's true trust debt.

He is not a traditional hero. He has the face of a Silicon Valley mogul: he can talk about missions, secure funding, organize talent, manage media, negotiate with large companies, and transform a lab into a world-class company.

The stronger his capabilities, the bigger the problem: if a company's guarantee to the world that "we want to benefit all humanity" rests on his personal credibility, then that credibility is no longer just a matter of private character but a question of public governance.

Altman had his rebuttals in court. He claimed Musk had attempted multiple times to have Tesla absorb OpenAI, which did not align with OpenAI's mission. He also stated that OpenAI had actually created immense charitable value.

This is the difficulty of OpenAI. It can claim to still be controlled by non-profit governance, or that commercialization allows the non-profit to have greater value; but ordinary people, upon hearing this, find it hard not to ask: If the public mission must rely on a company with a massive valuation and a powerful CEO to be upheld, then is it really a mission, or a trust loan?

The board had previously attempted to reclaim this loan in 2023. It failed.

Mission Loses to Reality

The OpenAI board is not powerless.

On paper, the non-profit board holds the mission oversight power. When OpenAI LP was established in 2019, it explained to the public that this was a capped-profit structure, with employee and investor returns capped, and any excess going to the non-profit, while remaining under the non-profit's control. This design seemed like a compromise, allowing for financing without completely relinquishing the mission.

The issue is that reality has developed far faster than the bylaws.

Since 2019, OpenAI's ties to Microsoft have deepened significantly. Microsoft provided funding, cloud services, and supercomputing, and gained commercialization rights. Court materials show that a large share of OpenAI's IP and employees moved to the for-profit entity. By the ChatGPT era, OpenAI was no longer just a research organization but a commercial system connecting users, customers, developers, cloud resources, investors, and global competition.

This kind of system cannot simply be stopped by pressing a button.

Microsoft CEO Satya Nadella was asked in court about Microsoft's $13 billion investment in OpenAI and the potential return of about $92 billion if it succeeds. His response was essentially that if the pie grows, the non-profit benefits too.

This logic is typical: commercialization is not a departure from the mission but rather an expansion of the mission's funding sources.

However, the same testimony also covered Nadella's text messages with Altman about launching the paid version of ChatGPT. Nadella asked when the paid version would be released. Altman replied that there was not enough computing power and the experience was not yet good enough. Nadella was impatient and said the sooner the better.

Once OpenAI and Microsoft became intertwined, the rhythm of products, customer commitments, computational restrictions, and business returns became entangled. The board can discuss the mission, but Microsoft needs to ensure customer experience; the board can be concerned about safety issues, but users and enterprises are already using it; the board can dismiss the CEO, but employees, investors, partners, and public opinion will swiftly push back.

Nadella's perspective on the 2023 board crisis is also crucial. He stated that he never received a clear reason for Altman's dismissal and criticized the board's handling as "amateur city." More importantly, he was already prepared to take Altman and other employees in at Microsoft if they could not return to OpenAI.

This is reality. The non-profit board seems to hold the steering wheel, but the engine, gas pedal, fuel, and passengers on board are not solely under its control. When an AI company is already linked to huge valuations, cloud providers, corporate clients, employee options, and global users, it is very difficult for a board representing the mission to genuinely slam on the brakes.

The bigger the AGI narrative, the larger the computing bills; the larger the computing bills, the greater the reliance on cloud giants; and the greater the reliance on cloud giants, the less the mission can be protected by bylaws alone.

In the AI era, computing power is not merely a backend resource. Computing power itself is power. Whoever provides the computing power participates in defining how fast a company can move, where it can go, and who it serves. Whoever can bear the costs of training failures can demand a share of the rewards after success. Whoever can ensure continuous contracts with corporate clients will have more voice in crises than the board.

This trial has let us see the whole picture: no single person destroyed the ideal. If ideals do not have a sufficiently solid institutional body, they will inevitably grow a skeleton of reality.

That skeleton may not necessarily be evil, but it will certainly no longer be simple.

Users Are Not Bystanders

Musk, Altman, Brockman, and Nadella are names far removed from our lives. Claims of damages in the hundreds of billions, nearly $30 billion in stock value, $13 billion in investment, $92 billion in potential return: figures this large stop feeling real. For ordinary people who sit in offices, squeeze onto the subway in the morning, and scroll through TikTok in the evening, the relationship with AI may amount to opening an app and asking it to revise a proposal, write some code, or translate an email.

But here's the issue.

OpenAI is no longer a distant laboratory. Its models are entering writing, translation, programming, searching, customer service, education, office software, and corporate processes. An ordinary person may not know whether OpenAI is an LP, LLC, or PBC, nor may they care whether Altman or Musk tells a better story, but they are continuously engaging with AI.

Children will use it for homework, schools will need to decide how to handle AI-generated essays; programmers will have it write code, and companies will need to determine how to measure people's outputs; media professionals will use it to research, outline, and revise headlines, while readers will face an influx of content whose sources they can no longer distinguish; companies will integrate it into customer service and approval processes, and employees will find their time and performance being squeezed by the systems.

We once thought we were merely users. But users wield tools, and tools are also shaping users.

What a model can answer and what it cannot; what content is considered safe and what is deemed risky; which companies can access more powerful models and which people can only use packaged versions; which languages, professions, regions, and bodies of knowledge are well supported and which are treated crudely. These issues may seem technical, but they eventually come to rest in the lives of ordinary people.

Thus, the OpenAI trial is, in fact, a window. Through this window, people can see that the manufacturing site of future infrastructure is neither clean nor transparent. There are smart individuals, ideals, fears, ambitions, shares, cloud bills, boardroom confrontations, and some private documents that they never thought would be publicly read out loud.

Water, electricity, roads, schools, hospitals, search engines, mobile operating systems—once these elements enter daily life, they are no longer just commercial products. AI is stepping into this position as well. It may not yet be as stable as water and electricity, but it has started to be relied upon much like them. A person can choose not to use a certain chatbot, but it's very difficult to entirely avoid the workflows, information gateways, and organizational rules transformed by AI.

Regardless of who wins in the end, it is highly likely that ordinary users will continue to use AI the next day. Students will still ask it to revise essays, programmers will still have it fill in code, enterprises will still integrate it into their systems, and entrepreneurs will continue to build applications around the models.

However, the court has at least peeled back a layer of packaging. It tells us that the AIs entering daily life are not sprouting from a transparent, stable, and purely public-spirited machine. They emerge from a specific group of individuals, a set of complex contracts, cloud computing bills, a board coup, some private diaries, and a struggle for control.

This is not a story that can simply be summed up with "capital corrupts ideals." The more authentic and unsettling reality is that AI is becoming a fundamental infrastructure for ordinary people, but its steering wheel is still in the hands of a few.

When the future begins to be shaped into products, ordinary people cannot just be users.

Note: Except for organizational structure and historical facts confirmed by public documents, this article's account of the trial, including its judgments about motive and responsibility, is based on testimony, closing arguments, and the statements of both sides. As of May 15, 2026, the court had not issued a final ruling.
