Introduction

In the past year, research on world models initially focused on representation learning and future prediction. The model first understands the world and then internally deduces future states. This approach has produced a series of representative results. V-JEPA 2 (Video Joint Embedding Predictive Architecture 2—a set of video world models released by Meta in 2025) was pre-trained on over 1 million hours of internet video and combined with a small amount of robot interaction data, showcasing the potential of world models in understanding, predicting, and zero-shot robotic planning.

However, a model predicting does not equate to the model handling long tasks. When faced with multi-stage control, the system usually encounters two pressures. One is that prediction errors accumulate continuously during long rollouts (consecutive multi-step deductions), making it easier for the entire path to deviate from the target. The other is that the action search space expands rapidly with the horizon (planning distance), resulting in continuously rising planning costs. HWM did not rewrite the underlying learning path of the world model but added a hierarchical planning structure on top of the existing action-conditioned world model, allowing the system to first organize stage paths and then handle local actions.

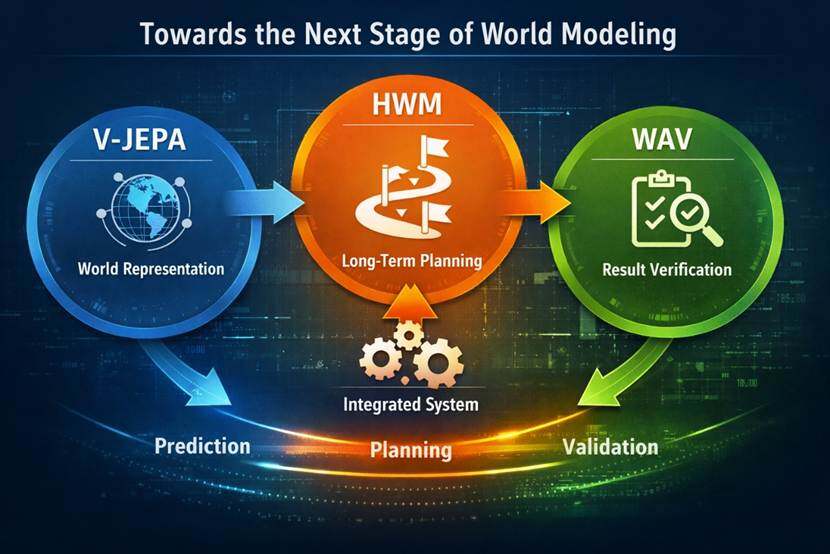

Technically, V-JEPA 2 (https://ai.meta.com/research/vjepa/) leans more towards world representation and basic prediction, HWM leans more towards long-term planning, and WAV (World Action Verifier: Self-Improving World Models via Forward-Inverse Asymmetry, https://arxiv.org/abs/2604.01985) leans more towards the model's ability to recognize and correct its own prediction distortions. The three lines of research are gradually converging. The focus of world model research has shifted from simply predicting the future to how to transform predictive capabilities into executable, amendable, and verifiable system capabilities.

1. Why Long-Term Control Remains a Bottleneck for World Models

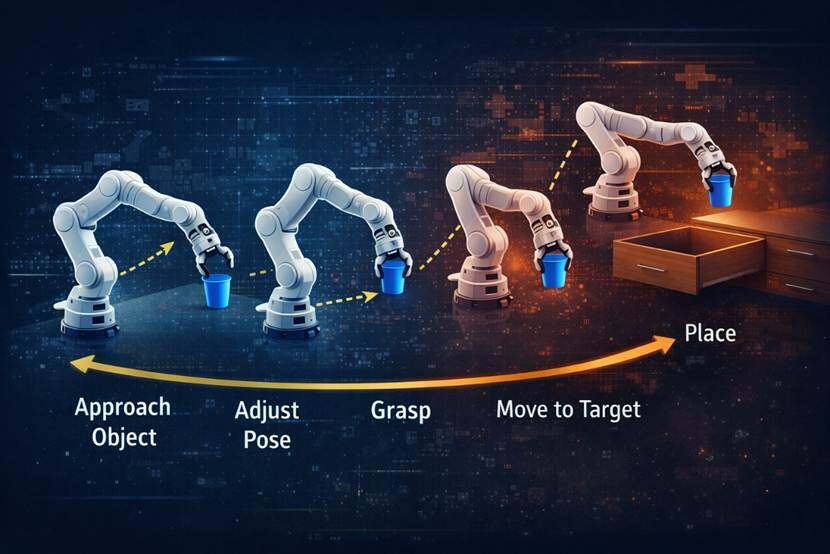

The difficulty of long-term control becomes clearer when placed within robotic tasks. Taking robotic arm operations as an example, picking up a cup and placing it in a drawer is not a single action, but a series of continuous steps. The system must approach the object, adjust its posture, complete the grasp, move to the target position, and then handle the drawer and placement. As the chain lengthens, two problems arise simultaneously. One is that prediction errors continuously accumulate along the rollout, and the other is that the action search space quickly expands.

What the system lacks is often not local prediction capabilities but the ability to organize distant goals into stage paths. Many actions may appear to deviate from the target when viewed locally, but in reality, they are necessary intermediate steps to achieve the goal. For example, raising the arm before grasping or stepping back a bit to adjust the angle before opening the drawer.

In demonstration tasks, world models have already been able to provide coherent predictions. However, once in real control scenarios, performance begins to decline, and problems emerge. The pressure comes not only from the representation itself but also from the planning layer still being insufficiently mature.

2. How HWM Restructures the Planning Process

HWM breaks the originally single-layer planning process into two layers. The upper layer is responsible for broader stage direction over longer time scales, while the lower layer is responsible for local execution over shorter time scales. The model does not plan according to a single rhythm but instead plans according to two different temporal rhythms simultaneously.

Single-layer methods typically need to directly search the entire action chain in the lower layer action space when handling long tasks. The longer the task, the higher the search cost, and the more easily prediction errors diffuse along multiple step rollouts. After HWM splits the process, the high layer only handles route selection at a longer time scale, while the low layer only handles the completion of the current action segment, breaking the entire long task into multiple shorter segments, thus reducing planning complexity.

There is also a key design here: the high-level action does not simply record the difference between two states; it uses an encoder to compress a segment of low-level actions into a higher-level action representation. For long tasks, the key is not just how much difference there is between the start and end points but how the intermediate steps are organized. If the high level only looks at displacement differences, it is easy to lose the path information in that segment of action chain.

HWM embodies a hierarchical task organization approach. When facing a multi-stage task, the system no longer unfolds all actions at once but first forms a rough stage path and then executes and adjusts them segment by segment. With this hierarchical relationship entering the world model, predictive capabilities will begin to more stably transform into planning capabilities.

3. From 0% to 70%, What Do the Experimental Results Indicate?

In the real-world grab and place task set up in the paper, the system only receives the final goal condition and does not provide manually broken down intermediate goals. Under these conditions, HWM's success rate reaches 70%, while the single-layer world model's success rate is 0%. What was once an almost impossible long task has become a highly probable achievable result after introducing hierarchical planning.

The paper also tests simulation tasks like object pushing and maze navigation. The results show that hierarchical planning not only improves the success rate but also reduces the computational cost during the planning phase. In some environments, the computational cost of the planning phase can be reduced to about a quarter of the original amount while maintaining a higher or comparable success rate.

4. From V-JEPA to HWM to WAV

V-JEPA 2 represents the route of world representation. V-JEPA 2 was pre-trained on over 1 million hours of internet video and combined with less than 62 hours of robot video for post-training (targeted training after pre-training), resulting in a latent action-conditioned world model (a world model predicting in the abstract representation space combined with action information) usable for understanding, predicting, and planning for the physical world. It demonstrates that a model can obtain world representations through large-scale observation and transfer this representation to robotic planning.

HWM is the next step. The model already possesses world representation and basic prediction capabilities, but once it enters multi-stage control, problems of error accumulation and search space expansion explode. HWM does not change the underlying representation learning route but adds a multi-time-scale planning structure on top of the existing action-conditioned world model. It addresses how the model can organize distant goals into a set of intermediate steps and then push forward segment by segment.

WAV further focuses on verification capabilities. For world models to enter strategy optimization and deployment scenarios, they cannot merely predict; they must also be able to detect where they easily become distorted and correct accordingly. It concerns how the model checks itself.

V-JEPA leans towards world representation, HWM leans towards task planning, and WAV leans towards result verification. Although they focus on different points, the overarching direction is consistent. The next phase of world models is no longer just internal prediction but gradually linking prediction, planning, and verification into a cohesive system capability.

5. Moving from Internal Prediction to Executable Systems

Many past works on world models were closer to enhancing the continuity of future state predictions or improving the stability of internal world representations. However, the current research focus has started to change, as the system needs to form judgments about the environment, convert those judgments into actions, and continue correcting the next steps after the results come out. To get closer to real deployment, it is necessary to control error propagation, compress search ranges, and reduce reasoning costs in long-term tasks.

Such changes will also impact AI agents. Many agent systems can already complete short-link tasks, such as calling tools, reading files, and executing several step instructions. However, once the task becomes a long chain, multi-stage, and requires mid-way replanning, performance declines. This is essentially no different from the challenges in robotic control; both stem from insufficient high-level path organization capabilities, leading to a disconnect between local execution and overall objectives.

The hierarchical thinking provided by HWM, where the high layer is responsible for paths and stage goals and the low layer is responsible for local actions and feedback processing, with an overlay of result verification, will likely appear in more systems in the future. The next stage of world models will no longer focus solely on predicting the future but will also organize prediction, execution, and correction into a runnable path.

免责声明:本文章仅代表作者个人观点,不代表本平台的立场和观点。本文章仅供信息分享,不构成对任何人的任何投资建议。用户与作者之间的任何争议,与本平台无关。如网页中刊载的文章或图片涉及侵权,请提供相关的权利证明和身份证明发送邮件到support@aicoin.com,本平台相关工作人员将会进行核查。