Author: Claude, Shenchao TechFlow

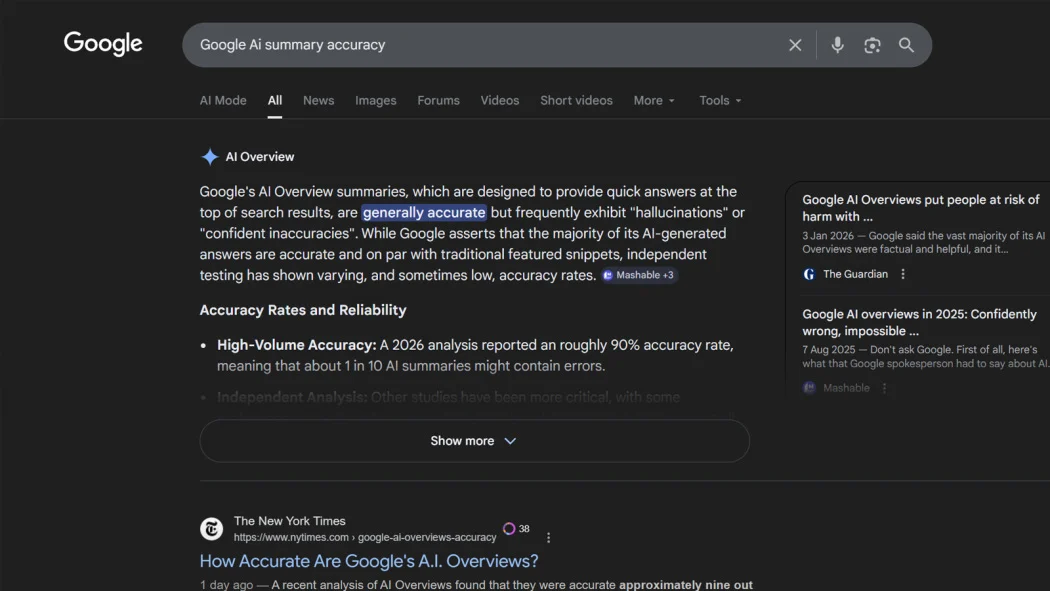

Shenchao Guide: A recent test by The New York Times, run in collaboration with AI startup Oumi, found that Google Search's AI Overviews feature is about 91% accurate. But given that Google processes roughly 5 trillion searches a year, that still means tens of millions of incorrect answers every hour. More troubling, even when an answer is correct, more than half of the cited links do not support its conclusion.

Google is delivering misinformation to users on an unprecedented scale, and most people are completely unaware.

According to The New York Times, AI startup Oumi was commissioned to assess the accuracy of Google's AI Overviews feature using SimpleQA, an industry-standard benchmark developed by OpenAI. The test covered 4,326 search queries and was run twice: in October last year, when AI Overviews was powered by Gemini 2, and again in February this year, after the upgrade to Gemini 3. Accuracy was about 85% under Gemini 2 and rose to 91% under Gemini 3.

91% sounds good, but at Google's scale it is another matter. Google processes about 5 trillion search queries each year. Rounding the 9% error rate to roughly one in ten, AI Overviews would generate over 57 million inaccurate answers every hour, nearly 1 million per minute.
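The scale argument is simple arithmetic, and a minimal sketch makes the assumptions visible. The code below assumes about 5 trillion searches per year and, more strongly, that every search returns an AI Overview; the function name `wrong_answers` is illustrative and not taken from the study. It evaluates both the measured 9% error rate and the rounded one-in-ten rate, since the 57 million per hour figure matches the latter.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; leap years ignored


def wrong_answers(searches_per_year: float, error_rate: float) -> tuple[float, float]:
    """Return (per_hour, per_minute) counts of inaccurate answers.

    Assumes every search produces one AI Overview, which overstates
    real-world volume if only some queries trigger the feature.
    """
    per_hour = searches_per_year * error_rate / HOURS_PER_YEAR
    return per_hour, per_hour / 60


SEARCHES_PER_YEAR = 5e12  # assumption: about 5 trillion searches per year

for rate in (0.09, 0.10):
    per_hour, per_minute = wrong_answers(SEARCHES_PER_YEAR, rate)
    print(f"{rate:.0%} error rate: {per_hour / 1e6:.1f}M per hour, "
          f"{per_minute / 1e3:.0f}K per minute")
```

Under these assumptions the 9% rate gives a little over 51 million wrong answers per hour, and the rounded 10% rate gives about 57 million per hour, or roughly 950,000 per minute. Either way the result stays in the tens of millions per hour.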

Correct answers, but wrong sources

More concerning than the accuracy rate is the problem of "ungrounded" source citations.

Oumi's data shows that under Gemini 2, 37% of correct answers came with "unverified citations," meaning the links attached to the AI summary did not support the information it gave. After the upgrade to Gemini 3, that share did not fall. It jumped to 56%. In other words, the model gives correct answers more often, but it is increasingly unable to show its work.
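Combining the two reported figures gives a rough sense of how many answers are both correct and backed by their links. The sketch below is a back-of-the-envelope calculation, not part of Oumi's study: it assumes the 37% and 56% figures are shares of correct answers (as reported) and that the accuracy and citation numbers describe the same set of queries. The function name `correct_and_supported` is hypothetical.

```python
def correct_and_supported(accuracy: float, unsupported_share: float) -> float:
    """Fraction of all answers that are correct AND supported by their cited links.

    accuracy: share of answers that are correct.
    unsupported_share: share of correct answers whose links do not support them.
    """
    return accuracy * (1 - unsupported_share)


results = {
    "Gemini 2": correct_and_supported(0.85, 0.37),
    "Gemini 3": correct_and_supported(0.91, 0.56),
}

for model, share in results.items():
    print(f"{model}: {share:.1%} of answers correct and supported")
```

On these assumptions, the share of answers that a reader could actually verify from the cited links drops from a little over half under Gemini 2 to about 40% under Gemini 3, even though headline accuracy improved.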

Oumi CEO Manos Koukoumidis's question gets to the heart of the matter: "Even if the answer is correct, how do you know it is correct? How do you verify it?"

AI Overviews' heavy reliance on low-quality sources makes the problem worse. Oumi found that Facebook and Reddit were the second and fourth most-cited sources in AI Overviews, respectively. Facebook was cited in 7% of inaccurate responses, compared with 5% of accurate ones.

A BBC reporter's fake article "poisoned" the results within 24 hours

Another serious flaw in AI Overviews is its susceptibility to manipulation.

A BBC reporter published a deliberately fabricated article as a test, and within 24 hours Google's AI summary was presenting the false information to users as fact.

This means that anyone familiar with how the system works could "poison" AI search results by posting false content and boosting its traffic. Google spokesperson Ned Adriance responded that Search's AI features are built on the same ranking and safety mechanisms that block spam, and said that "most examples in the tests were unrealistic queries that people would not actually search for."

Google's rebuttal: the test itself is flawed

Google raised several objections to Oumi's research. A Google spokesperson said the study "has serious flaws": the SimpleQA benchmark itself contains inaccurate information; Oumi used its own AI model, HallOumi, to evaluate another AI's performance, which may introduce additional error; and the test queries do not reflect how real users search.

Google's internal testing also showed that when Gemini 3 runs on its own, outside the Google Search framework, its rate of false outputs can be as high as 28%. Google emphasized, however, that AI Overviews draws on the search ranking system to improve accuracy and therefore performs better than the model alone.

Still, as PCMag's commentary pointed out, the defense undercuts itself: if your argument is that "the report pointing out our AI inaccuracies also used potentially inaccurate AI," that is unlikely to raise users' confidence in the accuracy of your product.
