By Lin Wanwan

In 1876, at the Centennial Exposition in Philadelphia, Brazilian Emperor Pedro II picked up the telephone invented by Bell and, on hearing a voice at the other end, exclaimed, "Oh my, it can talk!"

One hundred and fifty years later, on March 18, 2026, at the San Jose Convention Center, NVIDIA CEO Huang Renxun (Jensen Huang), dressed in a black leather jacket, stood on the stage of the GTC conference and made a surprising remark.

"In ten years, NVIDIA will likely have 75,000 employees. They will be very, very busy because they will work with 7.5 million AI agents."

The audience laughed.

75,000 people, 7.5 million agents, 1:100.

Huang Renxun himself laughed and added, "They will work around the clock. I hope our people don’t have to compete with them."

The applause faded, and the number was overshadowed that day by flashier chip launches and partnership agreements. But pull it back out and think about it for a moment, and it may be one of the most important statements of the entire conference.

Huang Renxun was not alone. About two months earlier, someone else had described the same kind of future in more concrete terms.

In January 2026, at CES in Las Vegas, McKinsey CEO Bob Sternfels sat on stage reporting numbers.

"We currently have 40,000 human employees and about 25,000 AI agents." Less than two years ago, that number was in the thousands. Those 25,000 agents generated 2.5 million charts over the past six months.

2.5 million charts. This work was previously done by newly hired analysts. Twenty-three or twenty-four years old, with the halo of world-famous universities, aligning the axes at three in the morning.

That was the starting point for every McKinsey newcomer: trading the most mechanical labor for a ticket onto the partnership track.

Today, the first leg of that journey has been taken over by agents. Sternfels said AI has increased the workload of certain positions by 25% while reducing others by 25%. The company has been neatly split in half, with one half expanding and the other contracting.

The NVIDIA and McKinsey stories say the same thing.

In a 1:100 world, the work is done by token-driven agents, while humans are the interfaces connected to the agents.
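To put the two companies on the same scale, here is a minimal sketch that turns the quoted headcounts into agents per human. The function name is illustrative, and the inputs are simply the figures cited above: NVIDIA's is a ten-year projection, McKinsey's is a reported current count, so the comparison is rough.

```python
def agents_per_human(agents: int, humans: int) -> float:
    """Return the number of AI agents per human employee."""
    if humans <= 0:
        raise ValueError("humans must be positive")
    return agents / humans


# Figures as quoted in the article (NVIDIA: projection; McKinsey: reported).
nvidia_projected = agents_per_human(7_500_000, 75_000)
mckinsey_today = agents_per_human(25_000, 40_000)

print(f"NVIDIA, projected: {nvidia_projected:.0f} agents per human")
print(f"McKinsey, January 2026: {mckinsey_today:.3f} agents per human")
```

On these numbers McKinsey today is still well below one agent per employee, which gives a sense of how far the 1:100 world is from the present.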

The external remote control is not in your hands

During the week of GTC, Huang Renxun was a guest on the All-In Podcast, where he made an even more powerful statement.

"Suppose you have an engineer with an annual salary of $500,000. If he does not consume at least $250,000 in tokens, I would be very worried."

The host followed up by asking whether NVIDIA was spending $2 billion on tokens for its engineering team, to which Huang Renxun replied, "We are trying our best."

An engineer who does not burn tokens is not worth the full $500,000.

NVIDIA's approach is straightforward: include tokens in the compensation package. Huang Renxun stated in his keynote speech at GTC that in the future, every engineer at NVIDIA will have an annual token budget of about half their base salary.

An engineer whose base salary runs to tens of thousands of dollars will receive an additional compute allocation worth half of that salary, which makes one-third of the total package pure fuel.

An individual with a full token budget essentially has access to over a dozen AI agents writing code, running tests, searching literature, and conducting simulations around the clock. Someone with only a free API quota is still typing by hand. The two resumes may look identical, but their output may differ by a factor of 5 to 10.
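The one-third figure is simple arithmetic. The sketch below assumes the package consists only of base salary plus the token budget (bonuses and equity are ignored); the function name and the 0.5 default are illustrative, taken from the "half of base salary" figure above.

```python
def token_share_of_package(base_salary: float, token_ratio: float = 0.5) -> float:
    """Share of (base salary + token budget) that is token budget.

    Assumes the package has only these two parts, which is a simplification.
    """
    if base_salary <= 0:
        raise ValueError("base_salary must be positive")
    token_budget = base_salary * token_ratio
    return token_budget / (base_salary + token_budget)


# Example using the $500,000 salary from the podcast remark quoted earlier.
share = token_share_of_package(500_000)
print(f"Token budget share of package: {share:.1%}")
```

Because the budget is defined as a fixed fraction of base salary, the share comes out the same at any salary level: 0.5 / 1.5, or one-third.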

This is no longer a theory in Silicon Valley.

In March of this year, Business Insider reported a change: engineers began asking during interviews, "What is the token budget for this position?" Theory Ventures partner Tomasz Tunguz referred to the token budget as the "fourth pillar" of engineer compensation, alongside base salary, bonuses, and equity. OpenAI President Greg Brockman phrased it more directly: how much computational power you can access will increasingly determine your overall productivity.

Huang Renxun noted in his GTC speech, "How many tokens come with my position? This has already become a recruiting tool in Silicon Valley."

In the 1950s, the wages of auto workers in Detroit ranked among the highest in the U.S. What truly enabled them to live a middle-class life was the assembly line pioneered by Henry Ford. Workers stood in place while the line moved past them, and each individual's output was amplified dozens of times by machinery. A Detroit worker's standard of living far exceeded that of contemporary craftsmen; their skills were not necessarily better, but they stood on a far more powerful assembly line.

The token budget of 2026 is the assembly line of 1950.

But there is a difference.

Detroit workers who left Ford could go to General Motors or Chrysler; the assembly line was available everywhere. Unions could negotiate with management over line speed and safer conditions.

The token budget is different. The day the company hands it to you, you are Superman; the day it is taken back, you are an ordinary person again. Stock can be cashed out and taken with you, and skills follow you when you change jobs. The token budget does neither. It is just an add-on, and the switch is in the company's hands.

Silicon Valley has already coined a new term to describe this situation, called "GPU drought."

Top AI researchers changing jobs now rank computational power first and the salary difference second. If they cannot run experiments or deploy agents, their abilities are capped by their quota. "How many tokens do you give me?" sometimes takes precedence over stock. Stock is a potential future payment that can lose value, while the token budget is productivity that can be realized today.

Those who do not use AI are simply out of the game.

Goldman Sachs estimates that AI could automate 25% of hours worked in the U.S. Mercer’s survey indicates that 65% of executives expect that 20% to 30% of employees will be reassigned due to AI. When these two figures are combined, the conclusion is clear: those with tokens will have explosive output, while those without tokens will be optimized away.

The dividing line is the token quota, and the relationship with human capability is becoming increasingly tenuous.

Token throughput is valuation

Personal value is determined by token quotas. What about companies?

In early March 2026, a Shanghai company named MiniMax released its first annual report since its listing. Annual revenue was $79 million, with an adjusted net loss of $250 million. Viewed through traditional financial metrics, this is a cash-burning small company with revenue only a fraction of Accenture's quarterly income.

But the capital market sees it differently.

MiniMax CEO Yan Junjie made a statement during the earnings call that mattered more than the whole report: "The value of the company is determined by intelligence density multiplied by token throughput."

Token throughput. Not revenue growth, not user numbers, not gross margin.

The data behind this statement is strong. By February 2026, average daily token consumption of MiniMax's M2 series models had grown sixfold from December, two months earlier. Token consumption in programming scenarios rose tenfold. On the AI model aggregation platform OpenRouter, MiniMax's M2.5 consumed 4.55 trillion tokens in two weeks, overtaking every U.S. model. It was the first time a Shanghai company had topped the global token consumption leaderboard.

The South China Morning Post reported this event using a phrase: "China's open-source models have ended a year-long market dominance by U.S. developers." What was the reason for this end? Token consumption. Whoever burns the most tokens is the winner.

This logic also applies to OpenAI. OpenAI's API platform processes 6 billion tokens per minute, a twentyfold increase in two years. The number of enterprise customers spending over $100,000 per year has increased nearly sevenfold in a year. After breaking down the data, Barclays analyst Ross Sandler concluded that OpenAI's consumer-side token consumption is more than twice that of Google Gemini.

Token consumption has become the hard currency for ranking AI companies.

Interestingly, the same thing is showing up inside companies. The New York Times recently reported on a trend called "tokenmaxxing": engineers at Meta and OpenAI are competing on internal leaderboards to see who consumes the most tokens.

Token budgets are becoming standard benefits, like free lunches and dental insurance a decade ago. An engineer working in Ericsson's Stockholm office told the New York Times that his spending on Claude might surpass his salary, but the company covers it.

A recent TechCrunch article calculated that an engineer might use about 10,000 tokens writing an article in an afternoon, while an engineer running an agent cluster could burn through millions of tokens in a day without typing a word.

Two years ago, the price for one million tokens was $33. Now it stands at nine cents. It has decreased by 99.7%. The cheaper the price, the more tokens are consumed. The higher the consumption, the more indispensable it becomes.
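The 99.7% figure can be checked directly from the two quoted prices. The sketch below is only a sanity check; the function names are illustrative, and the prices are the per-million-token figures cited above.

```python
def percent_decline(old_price: float, new_price: float) -> float:
    """Percentage drop from old_price to new_price."""
    if old_price <= 0:
        raise ValueError("old_price must be positive")
    return (old_price - new_price) / old_price * 100


def tokens_per_dollar(price_per_million: float) -> float:
    """How many tokens one dollar buys at a given price per million tokens."""
    return 1_000_000 / price_per_million


old_price = 33.00  # USD per million tokens, two years ago (as quoted)
new_price = 0.09   # USD per million tokens, now (as quoted)

decline = percent_decline(old_price, new_price)
print(f"Price decline: {decline:.1f}%")
print(f"Tokens per dollar then: {tokens_per_dollar(old_price):,.0f}")
print(f"Tokens per dollar now:  {tokens_per_dollar(new_price):,.0f}")
```

Seen the other way around, a dollar now buys several hundred times as many tokens as it did two years ago, which is why consumption can rise so steeply while budgets stay flat.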

Yan Junjie's forecast during the earnings call was that future market demand for tokens might grow by one or two orders of magnitude.

This is the new way of valuing a company in 2026. It doesn't matter how much you earn; it matters how many tokens are being burned. MiniMax lost $250 million, but the growth curve of its token throughput is steep, and that is what the capital market is willing to bet on. You might liken it to YouTube in 2006, which had no revenue but rapidly growing bandwidth consumption, and which Google paid $1.65 billion to acquire.

Back then, YouTube was consuming bandwidth. Today, MiniMax is consuming tokens. The measurement unit has changed, but the logic remains the same.

Production capacity can wait, but debt cannot

Another event took place during the same week as GTC.

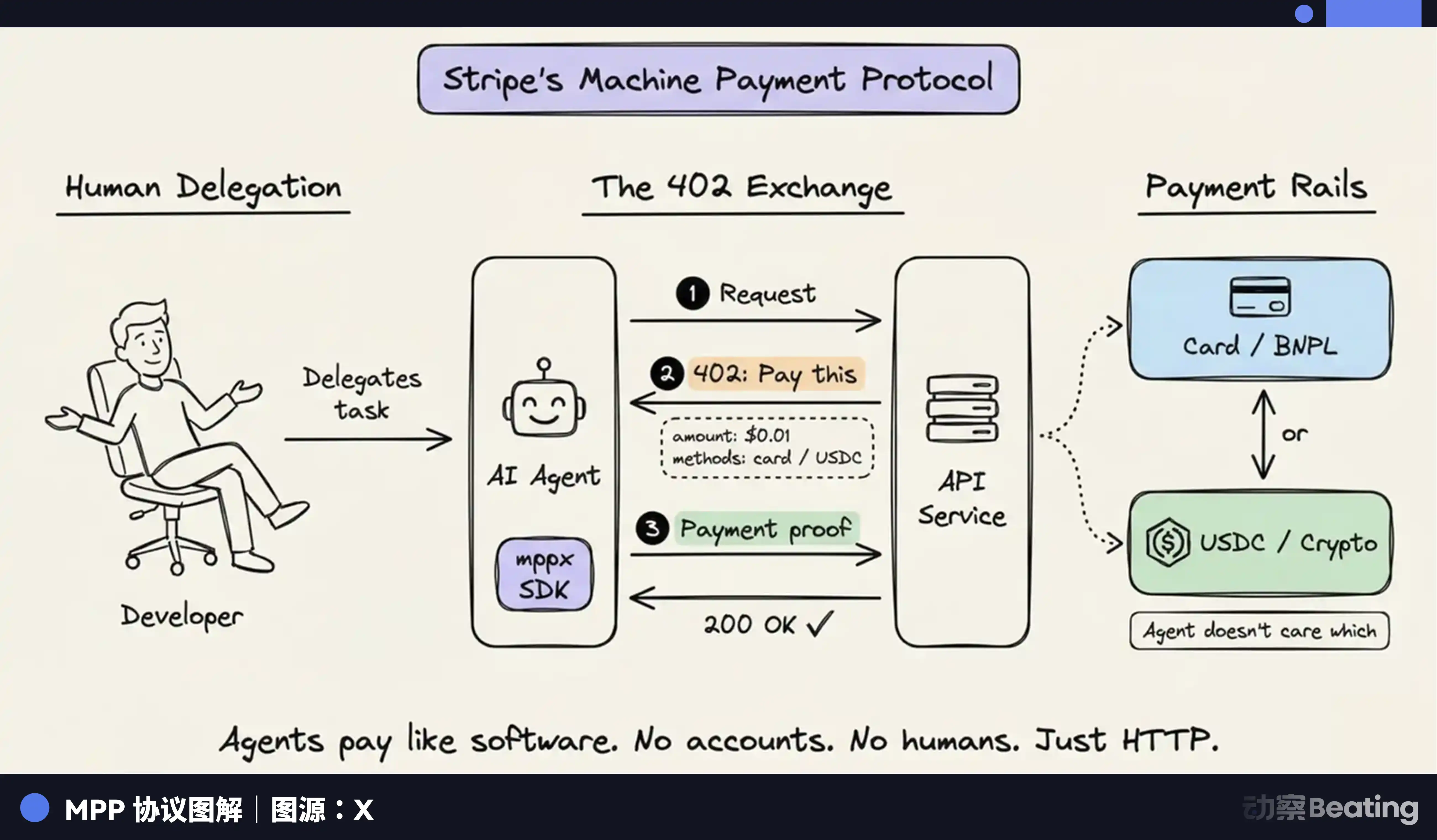

On March 18, Stripe announced the Machine Payments Protocol. In simple terms: AI agents can now spend their own money.

An agent that needs a dataset pays to download it. An agent that needs computing power for inference buys it by the second. An agent that needs to call another agent's API checks out on its own. The entire process requires no human confirmation. Visa has adapted credit card payments for this protocol, Coinbase has created a dedicated wallet for agents, and Mastercard is developing Agent Pay.

The consumption of tokens now has an additional source. Previously, it was only the scenario of "humans dispatching agents." Now, agents are also consuming tokens and using the money earned from tokens to buy more tokens. Stripe co-founder John Collison used a term: "torrent."

Huang Renxun provided a corresponding figure on stage: NVIDIA aims to increase its token generation rate from 2 million to 700 million, a 350-fold increase.

This is building an entire highway network, betting on exponential growth in traffic.

A $600 billion infrastructure bet requires one premise: the global consumption of tokens must be large enough to support a return on investment. This premise is currently just a hypothesis, and an expensive one at that.

In the last quarter of 2025, tech companies issued a record $108.7 billion in bonds. Entering 2026, the first few weeks saw another $100 billion. Morgan Stanley and JPMorgan estimate that, in the next few years, AI-related businesses may borrow a total of $1.5 trillion. According to Goldman Sachs' estimates, AI capital expenditures currently account for about 3% of U.S. GDP.

The first people on Wall Street to sense risk have already begun buying insurance. The trading volume of credit default swaps is rising. Spending a few basis points on premiums is a bet that these companies may not be able to pay back their debts. Daniel Sorid, Citi's head of credit strategy, remarked at an investor meeting, "As a credit investor, facing a transformation of this magnitude, the need for such large capital investment inherently causes unease."

Google co-founder Larry Page put it even more starkly, repeatedly telling Google employees, "I would rather go bankrupt than lose this race."

This is a textbook prisoner's dilemma: every giant is betting that its rivals will keep investing, so none of them can stop. Whoever stops is out of the game.

The optimistic case has hard data behind it. Token generation rates are set to rise 350-fold. Stripe has just let agents spend their own money. McKinsey went from thousands of agents to 25,000 in under two years. If the agent economy takes off across the board, the growth curve of token consumption could indeed become exponential.

But there is one date that keeps many people awake at night: the contract-renewal cliff in the second half of 2026.

From 2024 to 2025, companies were spending "innovation budgets." CEOs needed to say "We are embracing AI" on earnings calls, price sensitivity was low, and results were not held to a strict standard; the money was spent for show. In the second half of 2026, the first batch of pilot projects will come up for renewal. Innovation budgets will be used up, and the CTO will give up the seat at the table to the CFO. The CFO cares about only one number: ROI.

If a large number of pilots are cut, end-user token consumption will suddenly fall short. The $600 billion of capacity will already be in place, with data centers built, power connected, and chips installed, and it will sit idle.

This has happened before.

In 2000, telecom companies spent a trillion dollars laying undersea fiber optic cables. The bubble burst, and 90% of those cables lay dark on the seabed for nearly a decade. It wasn't until Netflix started streaming and the iPhone ignited mobile internet that the cables began to light up one by one. The cables were not laid in vain. But the companies behind the build-out did not survive intact: WorldCom and Nortel went bankrupt, and Lucent collapsed and was absorbed in a merger. The infrastructure remains, but the builders are gone.

In 2012, in China's photovoltaic industry, Wuxi Suntech and Jiangxi Saiwei (LDK Solar) drove module prices below global cost lines. This led to severe overcapacity and a brutal three-year industry shakeout. Demand did arrive later, and photovoltaic energy today is the fastest-growing energy source on Earth. However, Suntech went bankrupt, and Saiwei went bankrupt. The pioneers fell in the last stretch of darkness before dawn.

After Bell invented the telephone, Western Union refused to buy the patent for $100,000. Ten years later, Western Union was willing to pay $25 million, but Bell would no longer sell. Thirty years later, the telephone network covered the entire United States. However, most of the small companies that laid the network did not survive to see the widespread adoption of the phone. The winner was AT&T, which later acquired everything through mergers and monopolies.

The story of infrastructure always runs this way. The direction is almost always correct, but the time lag can be deadly.

Back to tokens. The structure described earlier (tokens become labor, humans become interfaces, token quotas define everything) holds only as long as tokens are consumed continuously, massively, and rapidly. The tenfold output of engineers relies on token supply; cutting it would reduce them to zero. OpenAI's $840 billion valuation is supported by commitments of computational power; if the agreements terminate, it shrinks. The $600 billion of infrastructure relies on growth in end-user consumption; a slowdown in growth means excess capacity.

Each layer depends on the layer below. If consumption growth lags construction by two to three years, pricing along the entire chain will come loose.

What railroad are you relying on?

In 2023, having GPUs meant power. In 2026, having tokens means power.

It sounds like a change of words, but the underlying changes are deeper than most realize.

GPUs are assets; once purchased, they are yours, locked in a machine room, inaccessible to others.

Tokens are flow. Your tenfold output, your high valuation, your bargaining chips at the negotiating table are all built on a continuous supply that does not belong to you. Once the tap is turned off, everything returns to zero.

When tokens become the real labor, humans become the interfaces atop the tokens. A good interface can maximize token value; judgment, taste, and experience still matter. However, how much an interface can do depends primarily on how many tokens it is connected to.

Farmers in America in the 1870s discovered that growing good wheat was not enough; they had to be by the railroad. Craftsmen in the 1950s realized that no matter how good their skills were, they could not compete with assembly line workers. Engineers in 2026 are discovering that beautifully written code means little without a token budget; without one, everything sits idle.

When tokens transform into true labor, humans turn into interfaces. The quality of the interface matters, but how much the interface is worth primarily depends on who supplies its power.
