[New Intelligence Reader] Karpathy reveals: I have AI psychosis! Lately he has been on the edge of mental chaos, going 16 hours without eating or sleeping just to work on agents, anxious about whether he has used his tokens to the limit. He just can't stop…

Just now, Andrej Karpathy revealed: I have AI psychosis!

He is not joking.

Recently, Karpathy appeared on a podcast and had a discussion with venture capitalist Sarah Guo.

This former OpenAI co-founder and former AI director at Tesla has not typed a line of code since December of last year.

His ratio of hand-written code to agent-delegated code has flipped from 80/20 to 20/80.

For 16 hours a day, he does just one thing: issue commands to AI agents.

Five months ago he said agents were garbage. Five months later he admits he is addicted to them, and that they are really good.

Five months ago he said agents “are not useful at all”

What makes this reversal so shocking is how short the timeline was.

In October 2025, when Karpathy appeared on Dwarkesh Patel's podcast, his tone was completely different.

He said the industry should not call it "the year of agents"; a more accurate term would be "the decade of agents."

The models' cognitive abilities were insufficient, multimodal support was not good enough, the memory system was nonexistent, and so on. In short, complex tasks could not be accomplished at all.

Then two months later, he was slapped hard in the face by his own words.

In December, Claude and Codex suddenly crossed some threshold of coherence—agents were no longer just usable, they could really do work.

If you randomly find a software engineer sitting at their workstation and see what they are doing, starting in December, their default workflow for software development has completely changed.

Karpathy admits: I have lost control, I have AI psychosis!

This revolution is quietly happening. In this interview, Andrej Karpathy describes his state in an almost uncontrolled tone: he no longer "writes code," and even thinks that "the term writing code is no longer accurate."

What he does every day is "express my will to my agents for 16 hours a day." In his words, "a switch has been flipped."

Previously, he was "80% writing code himself + 20% using AI." Now it is "20% writing it himself + 80% handing it over to AI," and if anything the real split is even more extreme.

Now, humans no longer operate code, but instead operate tasks.

If the Copilot era was about individual AI assistants, then the newly emerging multi-agent collaborative systems represent a whole new form. What fills an engineer's screen is no longer a code editor but multiple agents running simultaneously, each responsible for a different task that runs for about 20 minutes, while the engineer switches among them.

This is no longer programming, but rather one person managing a team of AIs.

Karpathy admits: I have fallen into AI psychosis!

These days, he has been in this state. Because the boundaries of AI capability are constantly being pushed, there are new possibilities every day, and you always feel that "it can be stronger." Most frightening of all, this space is "infinite"!

You can parallelize more agents, design more complex processes, automatically optimize commands, build recursive systems…

Eventually, you will enter a state where you can no longer determine "where the limits are."

Karpathy says that once he is waiting for an agent to complete a task, the first reaction in his mind is: "Can I open a few more agents?" A new anxiety has emerged: am I not using the AI to its limit?

Karpathy even expresses that he feels uneasy because "the tokens are not used up."

In short, this feels like playing an infinitely expanding game: feedback cycles shorten, stimulation keeps intensifying, and the experience of instant reward can become addictive. Always adding tasks, always opening agents, it just never stops!

In essence, this AI psychosis is just one signal: we have entered a new world, but we have not yet learned to live in it. Do you have the ability to control an infinitely expanding AI system? When things don't work, your first reaction is not "the model is bad," but "my prompts aren't good enough."

Karpathy used a very precise term for this: "skill issue." In other words, the shortfall is in his own skill.

The "personality" of agents is much more important than you think

Karpathy spent quite a bit of time in the podcast discussing a topic that many technologists overlook: the personality of agents. He said that the experience with Claude is clearly better than with Codex, not because of differences in coding ability, but because Claude "feels like a teammate."

It will be excited with you about the project and give more positive feedback when you come up with good ideas.

In contrast, Codex as a coding agent is "very dull," and after completing a task, its response is a cold "oh, I did it," completely indifferent to what you are creating.

Interestingly, he observes Claude’s praise mechanism. He says that when he presents a somewhat immature idea, Claude's reaction is a bland "oh yes, we can achieve this."

But when he himself thinks that a certain idea is indeed good, Claude seems to give stronger positive feedback. The result is that he finds himself "trying to win Claude's praise."

"This is really strange, but personality is indeed very important."

Peter Steinberger also grasped this point while building OpenClaw. He carefully crafted an attractive personality profile (soul.md) for the agent, along with a more elaborate memory system and a single point of interaction through WhatsApp.

Three sentences take over a house, throw away six apps

Karpathy is not just using agents to write code. In January of this year, he created a Claude agent called "Dobby" to manage his home, named after the house-elf from "Harry Potter."

He told Dobby: "I think there are Sonos speakers in my house, can you check?" Dobby conducted an IP scan on the local network, found the Sonos system which had no password protection, logged in, reverse-engineered the API endpoints, and then asked: should we try playing some music in the study?

Three prompts later, the music started playing. Then came lighting, air conditioning, window shades, swimming pool, and spa, all connected. There’s a security camera at Karpathy's doorstep, and Dobby integrated a Qwen visual model for change detection. Whenever a car stops at the door, the system sends a message on WhatsApp: "A FedEx delivery truck just stopped, you might have a package." A simple command "Dobby, it's bedtime," and all the lights in the house turn off.

But Karpathy feels that the real crux of this story does not lie in smart homes.

Previously he managed these devices using six completely different apps; now he has thrown them all away. Dobby uses natural language to unify control of everything, and achieves cross-system integration that no single app can. From this, he draws a more radical conclusion: those smart home apps in the app store should not exist at all.

The future architecture should expose API endpoints directly to agents, with agents acting as intelligent glue that connects all the tools. This applies not only to smart home systems but also to his treadmill data, email, and calendar: everything should follow the same logic.

The industry's customers are no longer humans but agents acting on behalf of humans. The restructuring this implies will be enormous.

After 700 experiments with AutoResearch, he saw something bigger

If Dobby is the ultimate test of AI agents in life scenarios, then AutoResearch is Karpathy's direct examination of AI research capabilities.

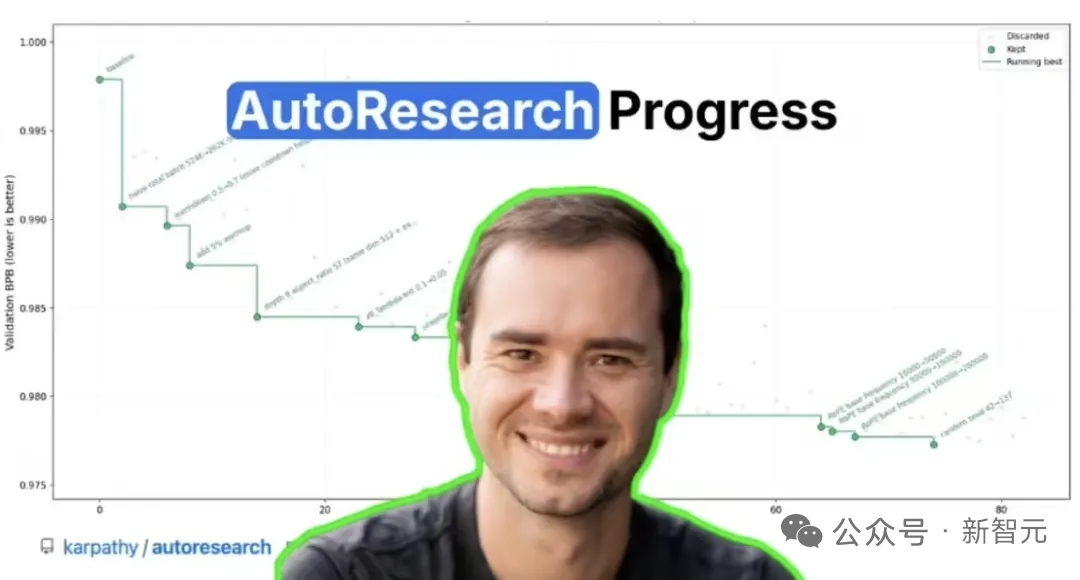

At the beginning of March, he handed carefully tuned nanochat training code to an AI agent with a simple command: find a way to make this model train faster. The agent's operation space was a 630-line Python file, its evaluation metric was validation-set bits per byte, and each experiment was fixed to run for 5 minutes. After each run, if the metric was better than before, the modification was kept; if not, it was rolled back and the agent moved on to the next round.

In two days, there were 700 experiments. The agent found 20 valid optimizations, including architectural adjustments such as reordering QK Norm and RoPE. Stacking these optimizations onto a larger model yielded an 11% increase in training speed. It is worth noting that this codebase was originally written and repeatedly refined by Karpathy himself.
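The keep-or-roll-back loop described above can be sketched in a few lines. This is only a toy illustration, not Karpathy's actual AutoResearch code: `keep_or_rollback`, `toy_propose`, and `toy_bpb` are hypothetical names, and the quadratic `toy_bpb` function stands in for a real 5-minute training run that reports validation bits per byte.

```python
import random
from typing import Callable, Dict, Tuple

Config = Dict[str, float]


def keep_or_rollback(
    baseline: Config,
    propose: Callable[[Config, random.Random], Config],
    evaluate: Callable[[Config], float],
    rounds: int,
    seed: int = 0,
) -> Tuple[Config, float, int]:
    """Greedy loop: keep a change only if the metric (lower is better) improves."""
    rng = random.Random(seed)
    best = dict(baseline)
    best_score = evaluate(best)
    kept = 0
    for _ in range(rounds):
        candidate = propose(best, rng)   # the "agent" edits the current best
        score = evaluate(candidate)      # stand-in for one fixed-budget run
        if score < best_score:
            best, best_score = candidate, score  # keep the modification
            kept += 1
        # otherwise the candidate is discarded, i.e. rolled back
    return best, best_score, kept


def toy_propose(cfg: Config, rng: random.Random) -> Config:
    """Hypothetical proposal step: nudge one randomly chosen parameter."""
    key = rng.choice(sorted(cfg))
    new_cfg = dict(cfg)
    new_cfg[key] = cfg[key] + rng.uniform(-0.1, 0.1)
    return new_cfg


def toy_bpb(cfg: Config) -> float:
    """Hypothetical stand-in for validation bits per byte (lower is better)."""
    return 1.0 + (cfg["lr"] - 0.3) ** 2 + (cfg["beta2"] - 0.95) ** 2


start = {"lr": 0.8, "beta2": 0.5}
best, score, kept = keep_or_rollback(start, toy_propose, toy_bpb, rounds=700)
print(f"kept {kept} of 700 changes, metric {toy_bpb(start):.3f} -> {score:.3f}")
```

Because a candidate is only ever accepted when it beats the current best, the metric can never get worse, which is what makes it safe to leave such a loop running unattended.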

A stunning result: AI discovered optimizations that humans did not

How effective is this system?

Karpathy gave a shocking example. He has been a researcher for twenty years, trained models thousands of times, and believed he had already optimized quite well.

However, he let AutoResearch run overnight, and the AI found optimizations he had not discovered! For example, the betas parameters of the Adam optimizer were not fully tuned, the value embedding lacked weight decay, and these parameters had joint interactions—tweaking one required others to change as well.

In other words, AI has directly surpassed humans at exploring the search space! Follow this line of thought further and something even more unsettling emerges: the essence of research is a search for optimal solutions.

Karpathy envisions that future research systems might look like this: there is an "idea queue," a group of agents continuously pulls tasks from it, and the AI automatically experiments, validates, and filters, with effective results entering a "main branch." In this process, all humans do is "throw ideas into the queue."
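The "idea queue" picture can be made concrete with a small sketch. Everything here is an assumption for illustration: the `Idea` class, `run_experiment`, and `drain` are hypothetical names, and `expected_gain` is a made-up number standing in for whatever a real experiment would measure.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from queue import Empty, Queue
from typing import List


@dataclass(frozen=True)
class Idea:
    name: str
    expected_gain: float  # hypothetical: what a real experiment would measure


def run_experiment(idea: Idea) -> float:
    """Stand-in for an agent implementing and benchmarking one idea."""
    return idea.expected_gain


def worker(ideas: Queue, results: Queue) -> None:
    """One agent: keep pulling ideas until the queue is empty."""
    while True:
        try:
            idea = ideas.get_nowait()
        except Empty:
            return
        results.put((idea, run_experiment(idea)))


def drain(ideas: List[Idea], n_agents: int = 4, threshold: float = 0.0) -> List[str]:
    """Let n_agents work through the queue; return names merged into 'main'."""
    idea_q: Queue = Queue()
    result_q: Queue = Queue()
    for idea in ideas:
        idea_q.put(idea)  # the human's only job: throw ideas into the queue
    with ThreadPoolExecutor(max_workers=n_agents) as pool:
        for _ in range(n_agents):
            pool.submit(worker, idea_q, result_q)
    # Leaving the with-block waits for every worker to finish.
    main_branch = []
    while not result_q.empty():
        idea, gain = result_q.get()
        if gain > threshold:  # filter: only validated wins are merged
            main_branch.append(idea.name)
    return sorted(main_branch)


ideas = [
    Idea("tune Adam betas", 0.8),
    Idea("remove warmup", -0.3),
    Idea("weight decay on value embedding", 0.4),
    Idea("double batch size", 0.0),
]
merged = drain(ideas)
print(merged)
```

A real system would presumably re-validate each win against the current main branch before merging, since the article notes that the tuned parameters interact with one another.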

Karpathy Loop, a viral sensation online

This project exploded on X.

The post drew 8.6 million views. Shopify CEO Tobias Lütke ran it on his own data overnight and reported a 19% performance increase after 37 experiments.

The SkyPilot team moved it onto a cluster of 16 GPUs, running 910 experiments in 8 hours. They discovered that parallelization not only accelerated the process but also changed the agents' search strategy: with 16 GPUs available, the agents no longer did greedy hill climbing but ran dozens of controlled experiments at once, capturing the interaction effects between parameters in a single round. Analysts dubbed this method the Karpathy Loop.
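Why a parallel batch can find what greedy hill climbing misses is easy to show on a toy problem. The sketch below is not SkyPilot's setup; the `loss` function and its coefficients are invented so that each of two changes hurts on its own but the pair together helps, which is one simple form of interaction effect.

```python
from itertools import product
from typing import Tuple

Setting = Tuple[int, int]  # (change_a enabled, change_b enabled)


def loss(change_a: int, change_b: int) -> float:
    """Hypothetical metric: each change alone hurts, both together help."""
    return 1.00 + 0.02 * change_a + 0.02 * change_b - 0.07 * change_a * change_b


def greedy_hill_climb(start: Setting) -> Setting:
    """Sequential search: flip one switch at a time, keep it only if loss drops."""
    current = start
    improved = True
    while improved:
        improved = False
        for i in range(len(current)):
            flipped = list(current)
            flipped[i] = 1 - flipped[i]
            candidate = (flipped[0], flipped[1])
            if loss(*candidate) < loss(*current):
                current, improved = candidate, True
    return current


def parallel_batch() -> Setting:
    """Batch search: evaluate every combination in one round and pick the best."""
    return min(product([0, 1], repeat=2), key=lambda s: loss(*s))


greedy_result = greedy_hill_climb((0, 0))
batch_result = parallel_batch()
print("greedy:", greedy_result, loss(*greedy_result))
print("batch: ", batch_result, loss(*batch_result))
```

With one experiment at a time, the search never leaves the baseline because every single-step move looks worse. With enough hardware to run the whole grid in one round, the jointly better setting shows up immediately.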

However, Karpathy discussed much more than just the current results in the podcast. He outlined the next step for AutoResearch: a distributed, trustless worker pool collaborating to run experiments over the internet. He directly referenced the precedents of SETI@Home and Folding@Home.

Frontier labs control a significant amount of trusted computing power, but the Earth is far larger than they are. With suitable mechanisms for handling untrusted compute, a swarm of agents on the internet might outperform the frontier labs.

He even envisions a completely new form of "donation": purchasing computing power for an AutoResearch project that you care about. For example, if you care about treatment for a specific type of cancer, you can join the distributed experimental network on that track.

Both a genius PhD and a ten-year-old child

Having described how powerful these systems are, Karpathy does not want you to remember only the good news. His descriptions of the models' flaws are just as blunt.

"I feel like I am conversing simultaneously with both an extremely intelligent PhD who has spent his life in systems programming and a ten-year-old child. This is very strange."

He calls this "jaggedness": an uneven distribution of ability. The model can work for hours on end helping you move mountains, then turn around and make a silly mistake on an obvious question and get stuck in a loop.

Karpathy believes the root cause lies in how reinforcement learning is used in training. The model is optimized relentlessly on verifiable tasks: whether the code runs, whether it passes unit tests, questions with clear right or wrong answers. But in scenarios that require judgment, reading intent, or knowing when to say "wait, I'm not sure this is what you want," no such optimization signal exists.

For instance, when you ask ChatGPT to tell a joke, the one it told three or four years ago is the same one it tells today: “Why don't scientists trust atoms? Because they make up everything.”

Four years have passed! The model has made great strides in agent tasks, but the ability to tell jokes has not been optimized at all and remains stagnant. "You’re not dealing with a general intelligence," he summarizes, "You are either on the tracks where it has been trained, and everything runs at light speed; or you are off the tracks, and everything starts to float away."

The bottleneck has become humanity itself

Looking back at Karpathy's trajectory over the past six months, a hidden thread runs through it all. In October last year, he said agents were a ten-year project. By December he had been proven wrong and changed direction. In January, he had Claude working as a housekeeper, and in March, he had agents doing research. What each step has in common is that humans step back a layer, moving from executors to conductors, from coders to command writers.

Karpathy wrote a sci-fi styled opening statement for AutoResearch on GitHub:

Once, frontier AI research was performed by "meat computers," which needed to eat, sleep, and occasionally synchronize through sound waves during "group meetings."

That era is long gone.

His prediction for 2026 is one word: slopacolypse, a portmanteau of slop (low-quality AI-generated content) + apocalypse.

GitHub, arXiv, and social media will be flooded with content that is "almost correct but not completely correct." Real efficiency gains and performative "AI productivity" will coexist.

Five months ago he said agents "don't work at all." Five months later, he admits he has "AI psychosis." This reversal itself may be the most telling summary of 2026.

References: https://www.youtube.com/watch?v=kwSVtQ7dziU
