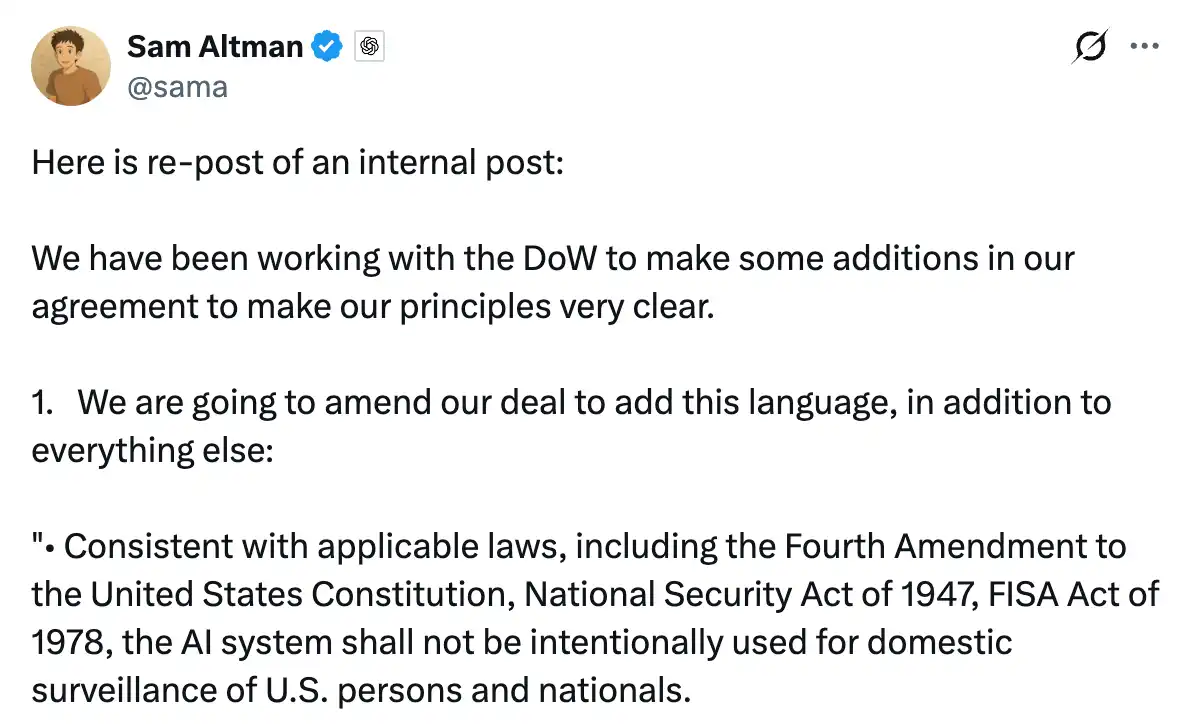

On Saturday morning, Sam Altman shared a screenshot of an internal letter on X.

He had written the letter to OpenAI employees on Thursday night. It said the company was in talks with the Pentagon and that he hoped to help "ease the situation." He posted it with a few lines of explanation, apparently to clarify in public what had happened in recent days.

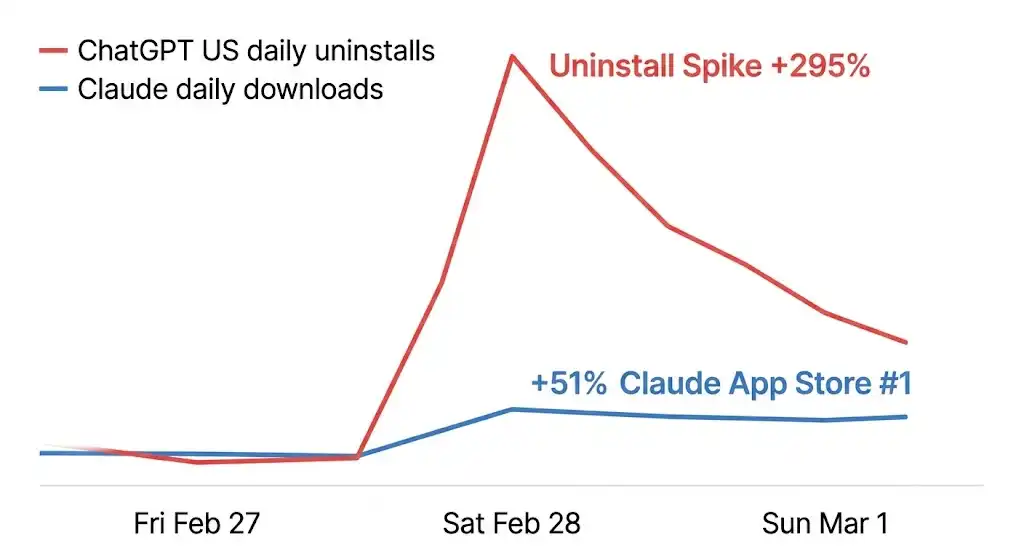

When he sent this tweet, Claude had already risen to number one on the free chart of the US App Store. Just the day before, ChatGPT was in that position.

Data from Sensor Tower recorded what happened in the following hours: on Saturday alone, the uninstall rate of ChatGPT in the US surged by 295% compared to a typical day, and one-star reviews skyrocketed by 775%. Meanwhile, Claude's download numbers rose by 51% in a single day. A wave of posts titled "Cancel ChatGPT" appeared on Reddit, with users sharing screenshots of unsubscribing, and someone commented, "fastest install of my life." A website called QuitGPT.org went live, claiming that 1.5 million people had taken action.

On Monday, the influx of users caused a massive outage for Claude. The service of a company the federal government had designated a "supply chain security risk" buckled under the weight of the users flocking to it.

Precise Product Counterattack

On the same day that the uninstall wave brewed, Anthropic launched a memory migration tool.

The function itself is not complicated. Users copy a prompt into ChatGPT that makes it output all of its stored memories and preferences, then paste the result into Claude, which imports everything in one click and picks up where the user left off with ChatGPT. The official website copy is a single sentence: "switch to Claude without starting over."

The timing of this tool is its most critical attribute.

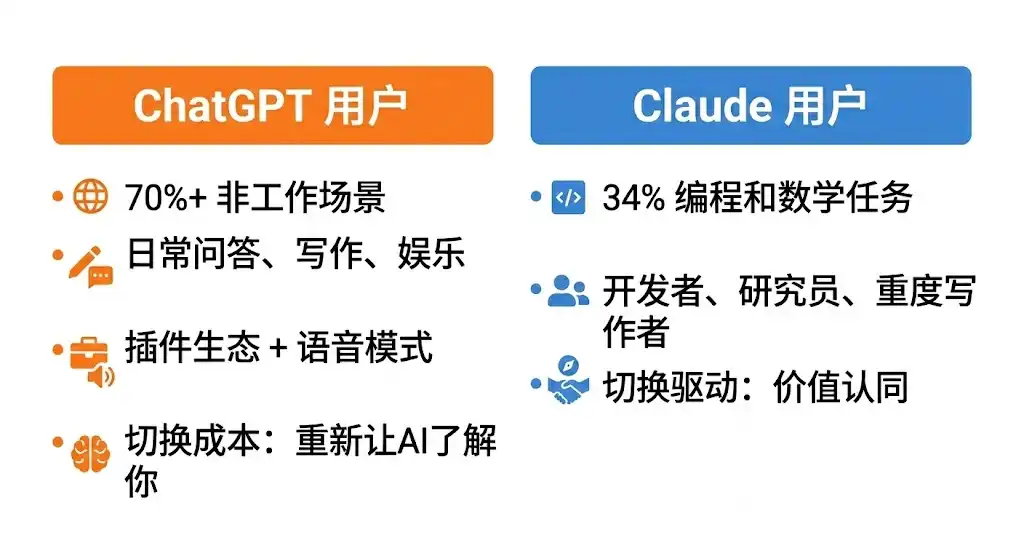

OpenAI's own data indicates that by mid-2025, over 70% of ChatGPT usage was non-work-related, including daily Q&A, writing, entertainment, and information retrieval. For many people it was the first AI they encountered, and a massive plugin ecosystem, Voice Mode, and deep integrations with third-party applications have woven it into daily life. For these users, the switching cost isn't just "downloading a new app"; it is retraining an unfamiliar AI from scratch to understand who they are. Accumulated memory was the strongest reason to stay.

Anthropic's own research shows that usage scenarios for Claude are highly concentrated. Programming and mathematical tasks account for 34%, making it the single largest category, while education and research have grown the fastest over the past year. The core users are developers, researchers, and heavy writers; this group is more rational and is more likely to switch tools based on clear value judgments as long as the migration cost is low enough.

The memory migration tool has minimized this cost. At the same time, Anthropic announced that the memory function would be fully opened to free users, a feature that was previously exclusive to paying customers.

However, a considerable portion of the incoming users were not Claude's original target audience.

From feedback on social media, a large number of ordinary users migrating from ChatGPT often react with: "It's not the same." Some find Claude's responses deeper and that it pushes back rather than simply agreeing with everything. Others notice that it has a cleaner approach to writing but doesn't generate images and lacks an interactive experience like Voice Mode.

Some originally sought a "more obedient replacement for ChatGPT" but found that Claude has a stronger personality, one that takes time to adapt to. A migration guide from TechRadar, titled "I wish someone had told me these things," was widely shared during those days. Its core message was that Claude and ChatGPT follow fundamentally different usage logic: the former resembles a principled work partner, the latter an all-purpose assistant.

This difference had always been part of how the two products were positioned, but the incident unexpectedly amplified it. Users flocked to Claude on moral grounds and then discovered a product that did not match their expectations: a more discerning, boundary-aware AI. Ordinarily that would be a reason to churn, but at this particular moment it became a reason to stay: if you trust a company's stance, it is easier to accept the logic of its products.

Days after the launch, Anthropic released data indicating that the number of free active users had grown over 60% since January, and daily new registrations had quadrupled. Claude experienced downtime due to overwhelming traffic, with thousands of users reporting login issues, which were resolved within hours.

Three Words in the Contract: What OpenAI Said and Did

Anthropic was the first commercial company to deploy an AI model on the US military's classified networks, through a collaboration with Palantir under a contract valued at about $200 million. However, the relationship between Anthropic and the Pentagon has continued to deteriorate over the past few months. The core of the controversy is a clause: the Pentagon requires AI models to be open to "all lawful uses" without any conditions. Anthropic insists on two exceptions: its models cannot be used for large-scale surveillance of US citizens and must not be used for fully autonomous weapon systems.

Around February 20, reports surfaced that an Anthropic executive questioned the use of Claude during the January military operation to capture Venezuelan President Maduro, raising concerns with the partner Palantir, which the military strongly objected to. On Thursday, the Pentagon issued an ultimatum, giving Dario Amodei until 5 PM that day to respond.

Amodei issued a statement before the deadline, stating that the company could not accept the current terms, "not because we oppose military uses, but in certain rare cases, we believe AI could undermine rather than uphold democratic values." Trump immediately announced that federal agencies must cease using Anthropic products within six months, and Hegseth classified it as a "supply chain security risk," a label typically used for foreign competitor companies. The contract was thus terminated.

The vacant position was quickly filled. Later that same day, OpenAI announced a contract with the Pentagon. In his internal letter on Thursday, Altman's stance had still been clear: he wrote that this had become "an industry-wide issue" and that OpenAI and Anthropic held the same "red lines," opposing large-scale surveillance and fully autonomous weapons. On Friday, the agreement was reached: the models would be deployed on military classified networks, limited to cloud operation, with engineers assigned to oversee them, and OpenAI stated that the same two restrictions were detailed in the contract.

Altman later opened a Q&A session on X and answered questions for several hours. Someone asked him why the Pentagon accepted OpenAI but banned Anthropic. His answer was: "Anthropic seems to focus more on the specific prohibitive clauses in the contract rather than citing applicable laws, while we feel satisfied citing the laws."

On its face, this statement is about a difference in method, but it exposes the real dispute at the heart of the matter.

Anthropic's negotiations broke down over the phrase the Pentagon insisted on: AI systems may be used for "all lawful purposes." Anthropic refused on the grounds that this phrase does not establish a fixed boundary in the context of national security. Existing laws have not kept pace with AI's capabilities in many respects, so what counts as "lawful" will be determined by the government's interpretation. OpenAI accepted this phrase while asserting that its contract contained similar protections.

Legal experts then analyzed the publicly available contract terms from OpenAI, pointing out two specific phrasing issues.

The surveillance clause states that the system may not be used for "unconstrained" surveillance of private information of US citizens. Samir Jain, Vice President of Policy at the Center for Democracy and Technology, pointed out that the wording here implies that "constrained" versions of surveillance are permitted. Under the existing legal framework, the government can legally purchase citizens' location records, browsing history, and financial data from data brokers, allowing AI to analyze this data, which technically does not constitute "illegal surveillance." Amodei later cited this very example in an interview with CBS.

The weapons clause states that the system may not be used for autonomous weapons "in situations where laws, regulations, or departmental policies require human control." This qualification means that the restriction only applies where other regulations have already mandated human control, and its binding nature entirely relies on existing policy. The Pentagon has the authority to modify its internal policies at any time. Legal scholar Charles Bullock wrote on X that the weapons clause in the contract relies on DoD Directive 3000.09, which requires commanders to retain "appropriate levels of human judgment," and this "appropriate level" is a standard that can be flexibly interpreted.

OpenAI's response to these concerns is that its models may only operate in the cloud, which structurally rules out direct integration into weapon systems. It also argues that the contract explicitly cites specific legal bases, and that these are more binding than textual prohibitions because the law is a verified framework. Altman himself acknowledged during the Q&A: "If we need to fight this battle in the future, we will, but this clearly poses some risks for us."

This is not a matter of one company being willing to compromise while the other holds firm to principles; it is a fundamental difference in security philosophy. OpenAI's bottom line is: I will not engage in illegal activities. Anthropic's bottom line is: I will not engage in actions that are not yet legally prohibited but that I believe should not be done.

This divergence has left a fissure within OpenAI as well. Last week, multiple OpenAI employees signed an open letter supporting Anthropic's stance against being labeled a supply chain risk. Alignment researcher Leo Gao publicly questioned whether the company's contract provided adequate protection. Criticisms were chalked on the sidewalk outside OpenAI's San Francisco office, while supportive messages appeared outside Anthropic's office. Altman's extended Q&A session on X was largely addressed to the people inside his own company who had sided with Anthropic from the start.

Two Outcomes from the Same Narrative

For years, Anthropic has defined its safety mission as "preventing civilization-scale risks," equating the potential threats of cutting-edge AI with nuclear weapons, positioning itself as the gatekeeper on this frontline. This narrative is core to its brand and a way to earn trust in the capital markets.

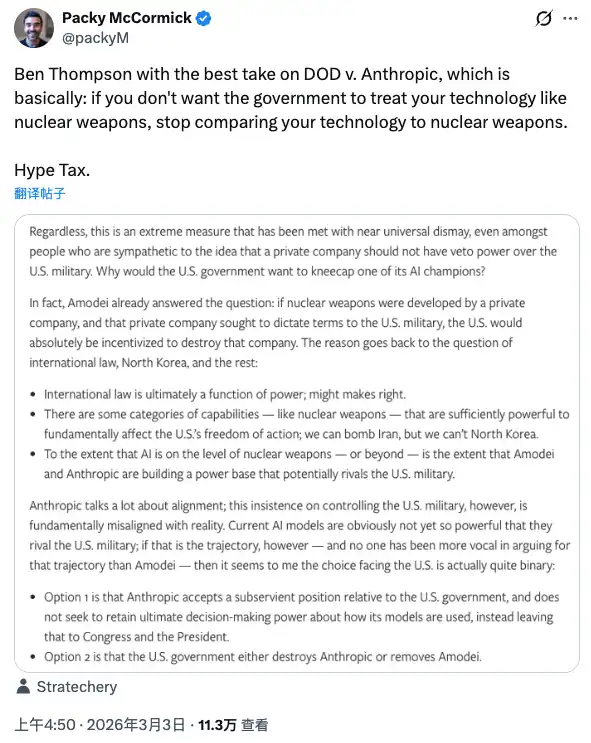

As the incident unfolded, tech commentator Packy McCormick invoked a concept from Ben Thompson: the Hype Tax. The idea is that if you build your influence through extreme narratives, you will have to pay the price when that narrative confronts real power. If you compare AI technology to nuclear weapons, the government will treat you the way it treats nuclear weapons.

Anthropic has paid the price for this narrative: losing a contract, being labeled a security risk, being named by the president, and all its products required to be removed from federal systems within six months.

But on the same weekend, this narrative produced a completely opposite effect on another dimension.

Ordinary users do not see contract terms, legal interpretations, or debates about security philosophy. They see that one company said no and was kicked out by the government, while another said yes and secured a contract. They made their choice using their own framework of judgment, and the result was a 295% surge in uninstalls, the top spot in the App Store, and crashed servers.

This is a rare collective moral statement from consumers in the AI industry.

Anthropic did not spend a dime on PR for this matter. Amodei's statement was restrained, not calling for user support, not naming OpenAI, and not positioning itself as a martyr. Yet the outcome occurred.

One noteworthy detail is that the event that drove users toward Claude was, in essence, OpenAI doing something entirely reasonable from a business perspective: signing a contract after a competitor had been banned and the contract was left in limbo, while claiming to have negotiated the same protective terms. Altman also made it clear that part of his motivation was to help ease the situation and prevent further harm to Anthropic.

Regardless of the motive, the result is that OpenAI secured a contract, and Anthropic's user base grew. Both sides incurred costs, and both sides gained benefits, just measured in different units.

There is one more thing worth mentioning here.

The Pentagon contract that Anthropic lost is valued at approximately $200 million.

Anthropic's current annualized revenue is $14 billion. The goal is to reach $26 billion by 2026.

Last month, Anthropic just completed a $30 billion Series E financing, with a valuation of $380 billion.

The arithmetic is not difficult: at the figures above, the lost contract is worth roughly 1.4% of Anthropic's current annualized revenue. But another question remains unanswered: when AI is in fact used extensively for military decision-making, will the "technical guardrails" and assigned engineers written into a contract actually work, whether under the terms OpenAI signed or under those Anthropic originally asked for?

This question is not found in any publicly available contract.
