Author: Salad Dressing

Appetite and lust are part of human nature. Most great business models grow out of this fact, and AIGC is no exception.

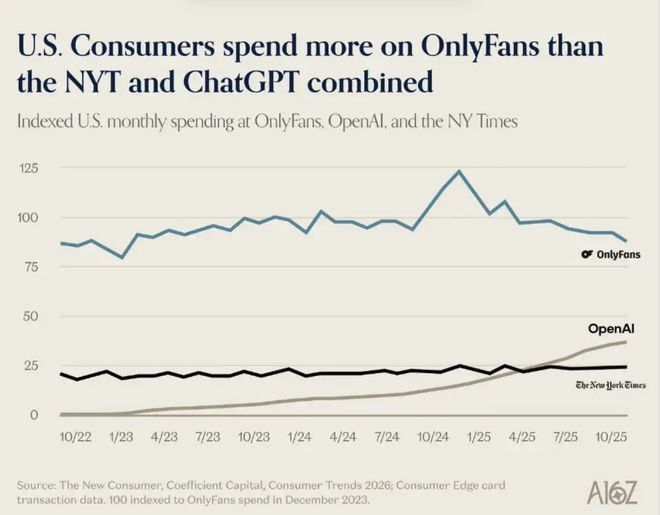

A16Z, a leading Silicon Valley VC firm, released a report on AI consumption trends. The report was supposed to be a serious discussion of AI productivity, yet tucked inside is a page with a wryly funny line chart: last year, American users spent less on OpenAI and The New York Times combined than they spent on OnlyFans.

Chart from the A16Z report

It's ironic and very real: productivity is less compelling than sexual tension.

So how much money can be made from borderline AI content?


Productivity Loses to Sexual Tension

The first wave of people creating AI virtual models knows this best.

Starting at the end of 2022, once tools like Midjourney and Stable Diffusion could produce images reliably, some people realized that this technology could create lifelike human faces and mass-produce them at nearly zero cost. They used AI to generate virtual women, gave them names, wrote character backstories, and added a few carefully staged "daily life" snippets, then ran the accounts on Instagram and TikTok as if they were real people. Intimate replies in private messages were handled by ChatGPT, offering what was called a "girlfriend experience." The entire process was almost fully automated, and the person behind it never needed to show his face.


This approach runs most smoothly on Fanvue, a competitor to OnlyFans. Fanvue takes a more lenient attitude toward AI content; according to its official disclosure, by November 2023 AI virtual models already accounted for 15% of the platform's total revenue. By 2024, top AI virtual models were generally earning more than $20,000 a month, and some well-run accounts exceeded $200,000 in annual income. The figures kept rising in 2025. In a 2025 interview, Fanvue CEO Will Monange said that the overall income of AI creators on the platform had grown by more than 60% compared with the same period in 2024, and that virtual models had become the platform's fastest-growing content category.

OnlyFans officially prohibits AI content, but people always find loopholes. Reddit threads regularly discuss how to make money on OnlyFans using AI; a common method is to find a real woman to pass face verification, then train an AI model on her photos to produce content in bulk.


No matter how strict a platform is, it can't hold back technological progress; AI-generated images are now so lifelike that even seasoned professionals struggle to tell them apart from real photos. Just a few days ago on Xiaohongshu, I came across a video of a handsome guy sitting in a car; if the top comment hadn't said, "This AI aesthetic is really nice," I wouldn't have realized he was an AI character.

Beyond adult content, there’s another group making money with AI, going in a completely different direction: children’s picture books.

Zhao Lei (pseudonym) was one of the earliest entrants in this field. At the end of 2022, he had just been laid off from a product position at a big company and was at home researching new paths. Midjourney had just begun to produce images reliably, and as he looked at the watercolor-style small animals it generated, a thought struck him: isn't this just picture book illustration? He spent two weeks researching Amazon KDP and settled on a very simple logic: ChatGPT writes the story, Midjourney creates the images, then he formats and uploads the book and waits for payments. "It was really easy to turn a profit back then," he said. "With a few books stacked up, I could have over ten thousand in passive income in a month."

But the opportunity didn’t last long. In the second half of 2023, AI picture books on KDP began to grow explosively, with nearly ninety thousand similar tutorials popping up on TikTok, all with titles like: EASY AI Money, earn one hundred thousand a month from children's picture books.

Everyone rushed into the same track, and sales were quickly diluted. Quality problems followed: AI-generated picture books featured dinosaurs with giant front legs and children with the wrong number of fingers. Major platforms began requiring users to declare at upload whether AI had been used, which effectively ended this track. "Making money with AI picture books is already very difficult now," Zhao Lei said.

Then he and the people making borderline AI content arrived, as if by coincidence, at the same conclusion: selling courses (on this front, the recently viral "lobster" has perfected the art).


Zhao Lei sells “the complete process from zero to shelf for AI picture books,” while the borderline-content crowd sells “AI virtual model building tutorials.” The buyers are the next batch of people, who have only just heard of all this and still think the window hasn’t closed.

Two different tracks, two sets of content, different packaging, but the same product: the illusion that “I, too, can be the pig that flies.”

Aesthetics and “Old Skills” Hold Many People Back

These sound like businesses making money at the right time, but what are the actual barriers?

A UX designer friend once gave me an answer: regional access restrictions and subscription fees. When Midjourney first came out, she wrote an operating guide priced at 99 yuan, which is still listed on Xiaohongshu and earning her passive income. From the perspective of tool usage, she’s right: the barriers are indeed falling fast.

But as someone whose drawing skills are stuck at stick figures and who repeatedly produces bad images in various AIGC tools, I must add a barrier she didn’t mention: aesthetics.


People used to joke that AI could not replace designers because clients often don't know what they want. I took it as just a joke until I used these tools myself and realized that it applied to me word for word.

Last year, I started a media account and wanted to create a logo around the concept of "accumulated islands," which can be understood as the things worth keeping in the chaotic flow of information. I found a reference image for the concept, opened the tool, uploaded the image, and added a pile of descriptive prompts to generate illustrations. The results were a mess; I revised the prompt seven or eight times, and each version was chaotic in its own way. I knew I wanted a certain feeling, but had no idea how to translate that feeling into instructions. In the end, I asked a designer friend for help; she spent twenty minutes, and the version she produced was in a completely different league from my two hours of effort.

The upper image is the version before revision; the lower image is the version after.

The problem isn't with the tool, but with me. More accurately, it's about my inability to convert vague aesthetic feelings in my brain into precise language.

This dilemma isn’t just mine.

A friend who works in content operations started using Seedance for short videos last year. She learned the tool quickly, but what truly held her back was writing storyboards. “I know I want a shot with texture, but 'texture' doesn’t do anything when you put it in a prompt,” she said. “I don’t know what that texture is in terms of light, framing, or camera movement.” She described what she ended up with as “somewhat similar but wrong in every aspect.”

Another friend uses Marble, a tool that generates 3D visuals from text and images, for content creation. He repeatedly generated and discarded images, only to realize after a long time that he had no frame of reference and didn’t know what “good” looked like, which made it impossible to judge whether the output was what he wanted.

Marble generates a 3D panoramic view.

In stark contrast, a friend with photography experience produced noticeably better results with the same tools. He said he didn’t spend much time studying prompt techniques: “I just know what kind of composition and lighting I want, and if I articulate those clearly, the tool will naturally deliver accurately.”

The capabilities of tools are improving rapidly, but the gap between users hasn't narrowed as a result; in some ways, it has widened. In the past, everyone struggled to create good things; now, those with accumulated aesthetic experience can produce great results, while those without it are still stuck somewhere between “usable” and “good to use.”

Tools are also responding to this reality. The rise of one-click template tools like NotebookLM rests on a simple logic: they bypass the premise that “you must first know what you want.” Templates make the aesthetic decisions for you, and all you need to do is fill in the content. The limitation of templates is just as clear, however: they can solve the “usable” problem but cannot guarantee “aesthetic appeal.”

This issue is equally evident in writing. A friend of mine who works in market planning was recently reassigned to handle PR and now needs to produce a large volume of written material. Her supervisor said she could use AI, which only confused her more, so she asked me for an AI writing manual I had written earlier. The crux was that she had no sense of what “a good PR piece” looks like and did not know the standards for quality. Faced with AI-generated content, she couldn’t tell how to revise it in the right direction.


On the other hand, I find using AI for writing to be much smoother. It’s not because I understand the tools better; it's because I have years of experience as a writer, giving me judgment over expression. I know what makes a sentence good or awkward, recognize where AI-generated content falls short, and where it needs to be pushed. Aesthetic skills here have become a very practical ability: they guide you to know where the end goal lies, rather than aimlessly prompting AI to run repeatedly.

When the capability of tools is no longer the issue, aesthetics and “old skills” become the biggest barrier; using the tools poorly may even be worse than not using them at all.

If What I Want Is Desire, Does the Difference Between AI and Real People Matter?

Those who seize opportunities first not only reap the benefits but also face the controversies. A paradoxical phenomenon has emerged in the current AIGC circle: whether AI was used matters more than the quality of the work.

Fang Yuan (pseudonym) is a brand designer; he took on a visual brand project and compressed a two-week process into three days using AI tools, feeling that the outcome was significantly better than before. He sent the work out and waited for a reply.

The first response was not feedback on the work but rather, “So quick, did you use AI?” Before Fang Yuan could reply, another message followed: “We do not accept any designs that involve AI.” To this day, he is still unsure whether the client even opened the attachment. He feels frustrated, having been penalized for high efficiency.


He is not alone in facing this situation. AI has quietly become a moral yardstick in the way many people evaluate work. This is different from Photoshop or Excel. No one would ask the creator of a retouched photo, “Did you use photo editing software?” nor would anyone receiving a financial report ask, “Did you calculate this using Excel?”

AI triggers a different kind of skepticism, one that comes close to asking, “Did you really do this?”

In creative work, there has always been an implicit contract; a good piece signifies that someone has invested time, effort, and refinement. However, the emergence of AI disrupts the causal link that everyone tacitly assumes exists between “input” and “output.”

A piece made with AI in three days and one made by hand in two weeks may be identical in quality, but the former will still feel “off” in some way. This “offness” can be summed up as “unfair.”

The University of Arizona conducted a study showing that when designers proactively told clients they had used AI assistance, clients’ trust in them still dropped by an average of 20%, even after the designers explained that AI had played only a supporting role.

As AIGC technology matures, this issue is evolving from a personal trust problem between parties to a platform issue.

Since 2023, the Chinese government has gradually issued regulations requiring labels on AI-generated content. First came the "Regulations on the Management of Deep Synthesis in Internet Information Services" in January, mainly governing deep synthesis technologies such as face swapping and voice synthesis. In August of the same year, the "Interim Measures for the Management of Generative Artificial Intelligence Services" formally took effect, bringing generative services like ChatGPT into scope. In March 2025, regulation was tightened again: the Cyberspace Administration of China and several other departments released the "Identification Measures for Artificial Intelligence-Generated Synthetic Content," extending the rules to all forms of content, including text, images, audio, and video.

What remains hard to pin down, however, is the definition itself.

Platforms can identify a video that is 100% AI-generated, but judging the gray areas is tricky. Does a selfie whose color and composition were adjusted by AI count as AI-generated content? Does a video shot by the creator but edited and scored by AI need a label? If AI produced the draft of an article and a person rewrote 70% of it, whose label should it carry…


The challenge of drawing boundaries actually boils down to accountability. When definitions are unclear, responsibility has nothing to anchor to. If a song’s melody is written by AI while a person rewrites the lyrics, who is held accountable in a copyright dispute? If a review is AI-generated and the blogger only adjusts the tone, who answers when the recommended product turns out to be misleading? The question "Was this done by AI?" actually probes something more fundamental: is there a person behind this work who takes it seriously and stands accountable? Is there someone thinking about your concerns and caring about the outcome?

The hardest boundary to draw is not the definition but the responsibility.
